Fake news, conspiracy theories and manipulated reporting have been with us since the beginning of time. But in today’s digital world, especially on social media, they are omnipresent and increasingly influence social values, change opinions on critical issues and redefine beliefs and facts. This year’s Ars Electronica Festival theme “Who Owns the Truth?” takes a closer look at this problem.

The media communication of truth

In addition to questions of interpretive sovereignty, this year’s focus is on the collective synchronisation of perception. Those who spread false information and conspiracy theories often work to ensure that everyone shares the same opinion and accepts the false information as truth. The aim is to gain control of public opinion by steering the perception of many people in the same direction. Clickbait headlines in particular try to grab attention with shocking statements, often at the expense of the truth.

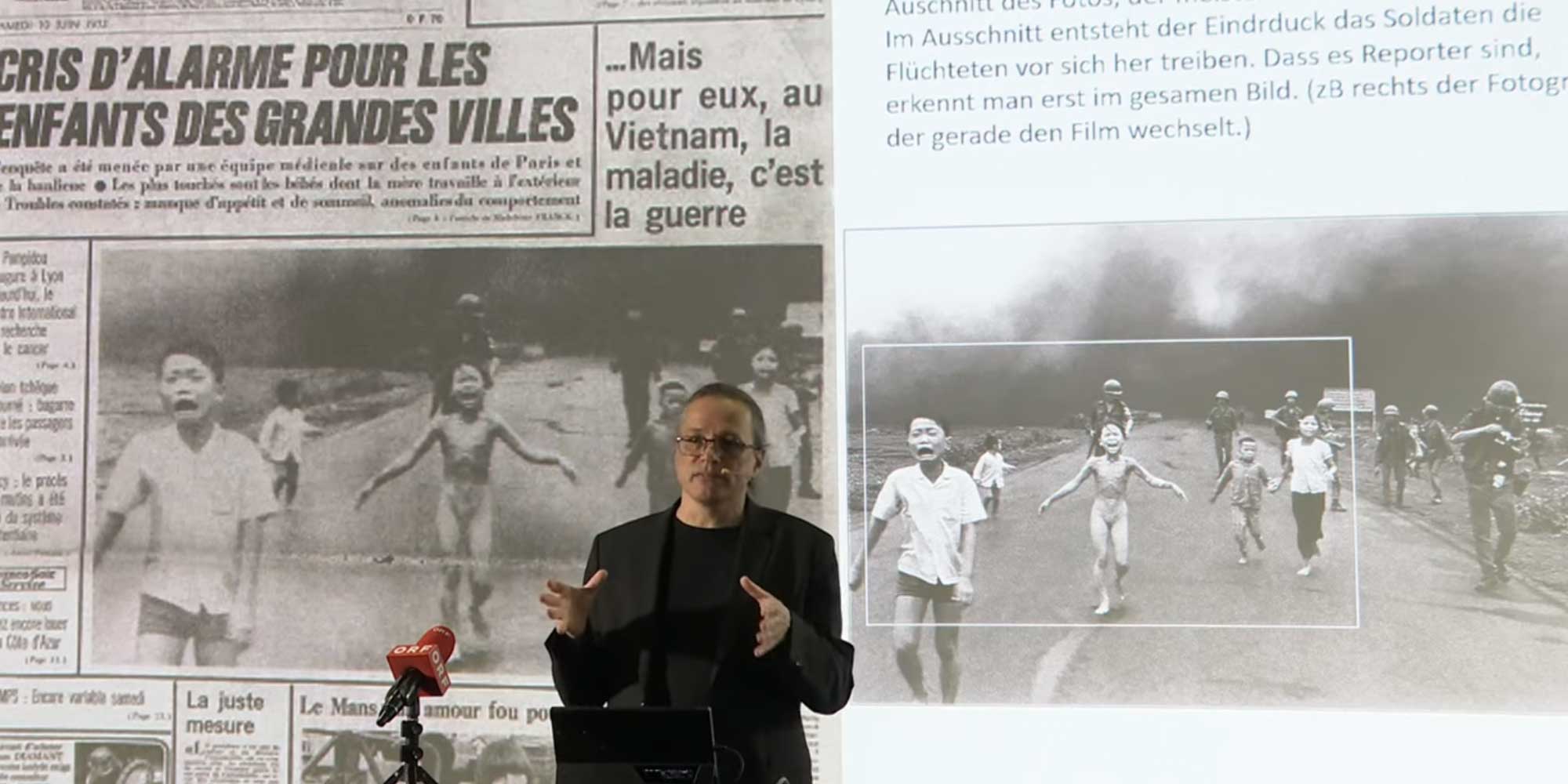

The impacts of fake news and manipulated reporting range from casting doubt on the scientific basis of man-made climate change to rigging presidential elections. But fake news and manipulated reporting do not only play a role in current affairs. In reporting on war and suffering, too, images have been manipulated and taken out of their temporal, spatial and thematic context.

A well-known example of this is one of the most widely used war images. A cropped detail of the image gives the impression that locals are being chased by soldiers. Only when you look at the whole picture do you realise that the supposed soldiers are not soldiers at all. Such cases of distorted war reporting are not unique; they have occurred regularly throughout history.

It is very important for our society to be aware of how mediation through the media can change our perception of reality. We seek authenticity and truth in the stories we are told. This is precisely why, in the age of social media and the constant availability of information, we should ask ourselves all the more: Who can we still trust? Who has the authority to interpret the truth? And how can we protect ourselves from fakes and conspiracy theories? When those who have the authority to interpret the truth lie, the question arises as to how our society should react, especially when the lies are told at high political levels. Whistleblowers such as Edward Snowden and Julian Assange have confronted unpleasant truths and found a way to deal with liars in positions of power and ensure that their unethical behaviour does not go unnoticed. But at what cost?

Truth, transparency and responsibility in the use of AI systems

The search for truth is not only important in politics. The question of truth, transparency and freedom of expression also arises when dealing with AI systems. What happens when we let ChatGPT speak? What answers do we get to our questions? What is true and what is false?

It is important to understand that AI systems are not knowledge machines. ChatGPT is not an encyclopedia; it is a communication tool. It can give us something that resembles information that already exists, but it is not programmed to tell the truth. As in discussions with friends, assumptions are made, and what is said is not always “right” or “true”.

As a society, we should make an effort to understand how AI systems work and how an encyclopedia differs from a communication machine. This is not about the technical components behind the systems, but about understanding how the data comes together. Developers have a responsibility to be transparent and to communicate their processes to the rest of society.

“In Europe, we have successfully mandated that all companies provide information on the exact amount of each substance contained in every product sold in supermarkets. If this requirement can be met without causing businesses to fail, it should also be possible to mandate that AI systems be transparent about the methods used to generate their data results.”

Gerfried Stocker, Artistic Director, Ars Electronica

Another important aspect in this context is the so-called kill switch. Many people fear that AI systems will take the “power” out of our hands. This fear raises the question of whether a kill switch is needed, who needs it and who is responsible for controlling it. Is it the AI systems themselves? Is it the people behind the systems, or is it the social system that allows it to happen in the first place?

It will be exciting to see how the debate about artificial intelligence and its impact on our society develops. But one thing is certain: we need to be proactive and take responsibility for the transparent and responsible use of AI systems.

The blog series will continue soon, and in future posts we will look at issues such as “authenticity and originality” and “ownership of nature”. In the meantime, here are some links and resources where you can find more information on this exciting topic.