It’s a seemingly simple decision: you either permit an iris scan to be performed on you and accept all the data security risks that entails, or you don’t, and thereby lose your claim to aid and refugee status. That’s the bitter reality in many refugee camps run by the United Nations High Commissioner for Refugees (UNHCR). And this is how new technologies are being tested in the Global South until they’re considered safe and thus marketable in the Western world.

Ariana Dongus has investigated this digitization strategy in use at UNHCR camps in conjunction with her research project at the KIM/Center for Critical Studies in Machine Intelligence. She and Christina zur Nedden also published an article on this subject for the German news weekly Die Zeit (“Getestet an Millionen Unfreiwilligen” [Tested on millions of involuntary subjects] here).

At the Ars Electronica Festival’s Theme Symposium on September 7th, she’ll elaborate on how the deployment of biometrics constitutes a continuation of colonial structures, and the extent to which refugees are performing a new kind of immaterial labor. And on Saturday, September 8th, Ariana Dongus will conduct an Expert Tour entitled “ERROR – Who decides what the norm is?” She gave us a preview in this interview.

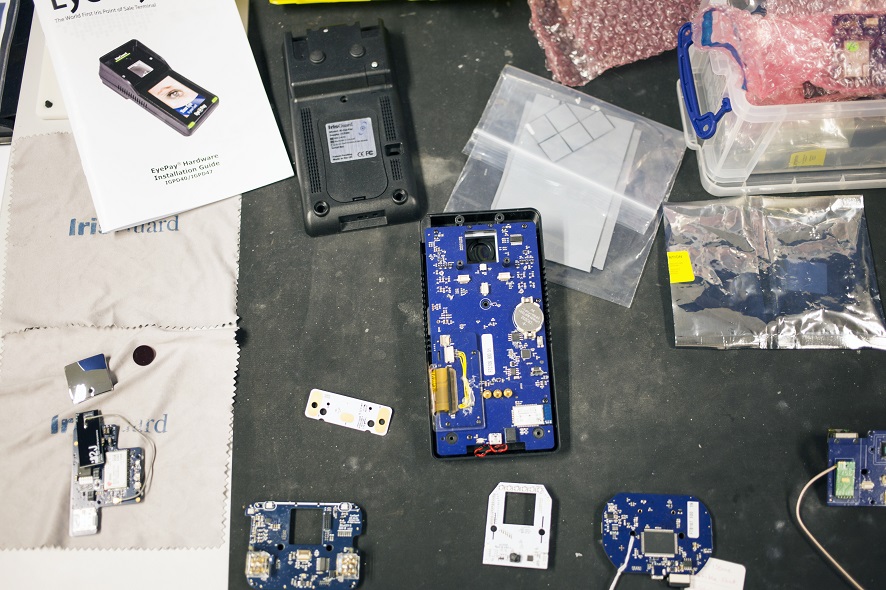

Prototypes of EyePay. Credit: Ariana Dongus

The title of your remarks in the “Fakes, Responsibilities and Strategies” block of the Theme Symposium is “The Camp as Labo(u)ratory.” What’s the meaning behind the wordplay?

Ariana Dongus: I’m talking about the use of biometrics in UNHCR refugee camps. Biometric scanning of refugees has become a central element of the digitization strategy in use at the UNHCR camps worldwide. My presentation at Ars Electronica is part of my research project at KIM/Center for Critical Studies in Machine Intelligence, in which I discuss two main issues.

One thread illustrates the hypothesis that these camps serve as laboratories in which this technology is being tested on human beings who really have no choice in the matter, and is thereby readied for market launch. So, under what conditions do the men and women in these camps become a mass of mere test subjects, and which colonial continuities are made visible here?

The second strand scrutinizes the political economy of the camps. To what extent are these biometrically registered camp residents thereby performing a new kind of immaterial labor and what makes these data valuable? In my opinion, they’re part of the value-added chain of new markets that have discovered how to exploit minorities and the world’s poor. The advocates of these activities refer to this as “Banking the Unbanked,” “Banking the Poor” or the 4th Industrial Revolution.

Chief Operating Officer Joe O’Caroll of IrisGuard shows the inner workings of EyePay. Credit: Ariana Dongus

Ever since the end of the Cold War and increasingly in response to the many political, ecological and economic crises and wars of the present day, the UNHCR has built camps worldwide to house refugees. Allegorically, the refugee camp can also be seen as a product of global deregulation in the 21st century. The UNHCR is an institution that acts locally, nationally and internationally. It makes life-and-death decisions affecting millions of people and forces some of them to enter a camp, as Michel Agier’s studies have shown. Here, these people’s so-called encampment (their “storage,” as the German term Lager figuratively suggests) becomes the manifestation of a permanent state of emergency. A significant proportion of the world’s population now lives in refugee camps; many have been there for decades. The UNHCR’s 2016 estimate is that 65.6 million people had fled their homes, more refugees than ever before. How they live and are treated in those camps indicates how the UN organizes, administers and productively exploits unwanted persons. Furthermore, there’s an enormous power imbalance when you consider that the UNHCR has the biometric data of millions of people at its disposal.

The context of insecurity, state of emergency and urgency makes any and all actions taken by the UNHCR by definition an experimental undertaking. Nevertheless, the use of experimental procedures and technologies like biometrics in this insecure context is, for that very reason, highly questionable. Technological experiments are being performed and legitimated with reference to this emergency, on the grounds that “something must be done urgently.” In the global North, new technologies are tested under legally mandated conditions before they’re ready to be marketed. But in this case, refugee camps are serving as laboratories and the refugees are live test subjects.

Imad Malhas presents an Iris Scan device for the desert (Eyehood). Credit: Ariana Dongus

You and Christina zur Nedden jointly reported on camps in Jordan in which refugees are registered with iris scans whether they want this or not. What’s the purpose of an iris scan, and what happens to someone who refuses to undergo it?

Ariana Dongus: Biometric scanning is performed on all refugees when they first enter the camp. Without the eye scan, they aren’t granted refugee status and receive no aid. So refusal isn’t an option.

The justifications include faster and more efficient organization and preventing double-registration. The technology is said to be doppelganger-proof. At the same time, in my opinion, it marks a paradigm shift in the image of refugees: people who flee to a UNHCR camp are now under blanket suspicion of being con artists, rather than being seen first and foremost as people who seek help and should receive it. They receive help only once they’ve been registered.

Workstation. Credit: Ariana Dongus

Your Symposium block has to do with, among other things, responsibility. What’s your assessment of the situation in the Jordanian camp? What responsibility does the UNHCR assume by bringing in IrisGuard, and, conversely, what responsibility is the UNHCR evading thereby?

Ariana Dongus: IrisGuard is a company that offers end-to-end solutions, which means they provide the hardware, the software and a cloud platform to which the data are saved. The data are also saved to a database in Geneva, Switzerland, but they’re simultaneously available worldwide on the cloud. According to IrisGuard, no one besides UNHCR has access to the data. Nevertheless, there are many open questions—for instance, what’s being done with the data by the camp’s supermarkets, which, of course, register who purchases what, when, etc.

So, according to IrisGuard, the UNHCR bears full responsibility. And this isn’t a bad idea from a legal perspective, since the UNHCR can’t be held legally liable for, say, a proven instance of misuse of the data, leaks or inadequately secured access to its computers. I don’t know whether you can say that both partners are trying to shift responsibility to each other, but what’s very clear indeed to me is that, in this workflow of biometrically capturing millions of people, a lot of trust is being placed in the technology as a neutral, value-free tool, and thus the technology has perhaps been assigned a bit too much responsibility.

Generally speaking, the UNHCR increasingly works on the operational level in public-private partnerships, and a large proportion of the aid-delivery process is outsourced to the private sector. Most of these partners are commercial firms with business models based on Big Data. The administrators of humanitarian aid organizations, too, now seem to believe that data gathered on a massive scale reveal patterns of social physics. This occurs in a setting in which the UNHCR is fostering, as its basic principle, a climate like that prevailing among US startup enterprises.

Prototypes. Credit: Ariana Dongus

I would, therefore, describe as a colonial strategy the fact that “fail fast, often and early,” a Silicon Valley slogan, has become the guiding principle that UNHCR front-line managers apply to those partnerships and transplant as a faith-based mantra to the totally foreign soil of a refugee camp. In particular, fostering purportedly neutral, apolitical technologies meant to serve as a substitute for political action manifests an aggressive technological utopianism that shifts responsibility for this acute emergency to the refugees themselves.

In my opinion, this goes on to create a precarious situation—the amelioration of which is actually the UNHCR’s mission—and institutes a regime of mistrust at the expense of the refugees. Thus, since 2016 at the very latest, biometric registration of all refugees has been a part of the UNHCR’s basic strategy to establish identity management and the institutionalization of cash-based interventions. It used to be that food rations were distributed; today, increasingly, cash is disbursed or there’s a system of cashless payment, meaning that the refugees check out at the camp’s own supermarket with a wink of the eye!

Credit: Ariana Dongus

What are the risks of such biometric recognition systems, and what are the benefits?

Ariana Dongus: I fear that we actually still don’t know for sure what could happen with the data, precisely because this hasn’t been going on so long. When they’re registered, refugees have to sign a waiver. We asked to examine it, but the UNHCR refused our request. I mean, it’s quite doubtful that these people really know what’s being done with their data.

In Jordan, for example, the UNHCR shares its iris scans with the Cairo Amman Bank. From there, the transactions are coordinated; some of them are done using crypto-currencies like Ethereum. It’s not clear if the people even know this, and it’s even less clear whether, and if so to what extent, this bank shares the data with third parties. Under these conditions, I find it very problematic that no alternative to scanning is offered. With whom are these data being shared? For example, over 68,000 Rohingyas, a Muslim minority persecuted in Myanmar, fled from this terror and violence over the border into Bangladesh. Their fingers, eyes and faces were biometrically registered, and the UNHCR shared these data with the government of Bangladesh. This past spring, Bangladesh entered into negotiations with the government of Myanmar to send these people back, along with their data. Now, consider what this means to an ethnic minority persecuted in their homeland on account of their identity. These databases can have life-threatening consequences if they fall into the wrong hands! This is a huge dilemma.

Another danger is something that’s structurally built into this technology: the tendency to discriminate. This technology functions via automatically created templates. A photograph is taken of a person’s eye or face, and an algorithm converts it into a barcode. This is a standardized process that researcher Joseph Pugliese refers to as “structural whiteness.” And of course, it’s clear that eyes and faces that don’t conform to the norm are often either not recognized or misidentified.

Imad Malhas in front of packages with EyePay. Credit: Ariana Dongus

I think that dealing responsibly with such data must always mean giving highest priority to the rights and security of human beings. Coming up with approaches to really putting people and their needs on the front burner is the pressing matter now being discussed at many NGOs.

Christina zur Nedden and I interviewed Zara Rahman of the NGO The Engine Room in conjunction with our research. They critically and attentively follow the UNHCR’s work, and focus their efforts on showing how activists and other organizations can deal responsibly with data and use them as agents of social change.

In general, it’s evident that biometric applications constitute a rapidly growing market. And they’re not only being deployed in refugee camps; the iPhone X with built-in facial recognition software is a popular example. But it’s also clear that these biometric applications can only be considered safe for the Western market once they’ve been tested in the global South, as sociologist Katja Lindskov Jacobsen presented in detail in her study.

Naturally, there also has to be acceptance of this technology in Western markets; that’s clear. But there’s also a hidden history of experimentation, a historical constant that goes back long before colonialism, whereby technologies are tested on the bodies of minorities in unsafe territories in order to be made safe for citizens of Western regions. Here as well, we can pose the question of what sort of work this actually is…

I think that this can be called a new globally effective norm, which takes it completely for granted that identity-making necessarily entails registering biological traits and measuring the human body to generate biometric and DNA data. These digital identities are stored in databases, which are error-prone, highly problematic and very valuable. Author/filmmaker Hito Steyerl expressed this succinctly some time ago: “identity is the name of the battlefield over your code—be it genetic, informational, pictorial.”

Ariana Dongus is a Berlin-based writer working with (moving) images. She is currently pursuing her PhD at the Hochschule für Gestaltung in Karlsruhe with the Center for Critical Studies in Machine Intelligence.

Ariana Dongus will speak at the “Fakes, Responsibilities and Strategies” block of the Theme Conference on Friday, September 7, 2018 at 14:00 in the Conference Hall at POSTCITY Linz. The entire conference program is available here. She will also lead an Expert Tour entitled “ERROR – Who decides what the norm is?” on Saturday, September 8th from 12:00 to 13:30. Learn more here.
