IRCAM, the world’s largest research center dedicated to both musical expression and scientific research, is a partner of the Ars Electronica Festival 2019. The institution is also involved in the STARTS Initiative as coordinator of the STARTS Residencies and will present these activities at the STARTS Day. Hugues Vinet, head of research activities at IRCAM, told us in an interview how the institute works, why AI has social relevance and what role he and his team will play at the festival.

IRCAM is the world’s largest public research center dedicated to both musical expression and scientific research. Can you tell us a little bit about your work and research, and about your institution?

Hugues Vinet: My background is in signal processing. From the mid-1980s I worked at the Musical Research Group (GRM) in Paris on the first real-time audio workstations, and I designed the early versions of the GRM Tools product, which made creative audio processing tools broadly available on personal computers. In 1994 I was called to manage IRCAM’s R&D activities.

IRCAM was founded by Pierre Boulez in 1977 as part of the Centre Pompidou project. At the end of the 1970s, Boulez had a vision that the renewal of contemporary music would be achieved through science and technology: it drove the founding of IRCAM as a pioneering art-science institution gathering scientists, engineers and musicians for their mutual benefit. As we celebrate the 40th birthday of Ars Electronica, the proximity of the founding dates of both institutions is amazing…

IRCAM has developed a lot since then. In 1995, together with composer and musicologist Hugues Dufourt, I co-founded a joint lab hosted at IRCAM with some of the main academic institutions in France (CNRS, then Sorbonne Université); it is currently named STMS, for Science and Technology of Music and Sound. Its 150 researchers and engineers are involved in a broad spectrum of scientific disciplines: signal processing, computer science, acoustics, human perception and cognition, musicology… The main goal is to support contemporary artistic production with new technology. Thirty works, mostly in the performing arts, are created every year in collaboration with some of the greatest composers and performers and produced in various venues, including IRCAM’s annual ManiFeste festival in June.

How is your research connected with AI, and what role does artificial intelligence play?

Hugues Vinet: Research in AI started at IRCAM in the mid-80s, with the development of rule-based systems for sound synthesis (Formes) and of LISP-based computer-aided composition environments (PatchWork, then OpenMusic). Relying on symbolic modeling of musical structures, they have provided several generations of composers and musicologists with high-level and visual programming paradigms for algorithmic generation and analysis of musical materials. Recent research includes computer-aided orchestration based on instrument sample databases.

Another important research topic in AI has been Music Information Retrieval, aiming at extracting relevant metadata from audio samples and music recordings. IRCAM coordinated the first related EU projects and developed leading MIR research and technology through various collaborations with industrial actors.

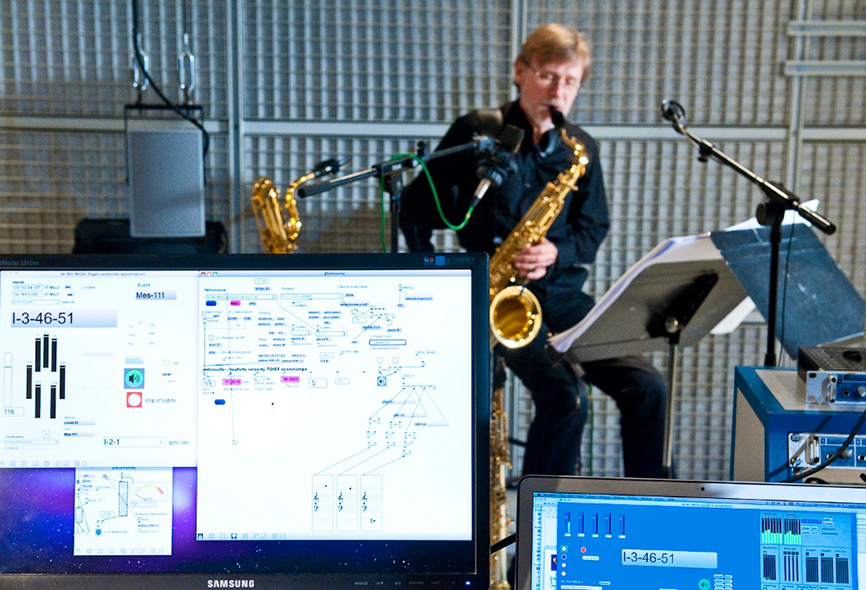

In parallel, machine-learning techniques were applied to the use of computers in live situations: real-time computer/performer synchronization for mixed pieces (score following), and gesture recognition for the design of new instruments and for interactive dance. As a further step, systems have been developed for human/computer co-improvisation and co-creativity (OMax and DYCI2).

For several years, the availability of deep learning outperforming classical modeling techniques has led to a major reorientation of research, in particular in audio analysis and synthesis. Deep learning also potentially brings artists radically new ways of representing, manipulating and generating musical material, which are currently being explored in various directions.

Is AI and music a topic that concerns only science, or will it also affect society, and not only as an audience?

Hugues Vinet: Indeed. The notion of audience is evolving, and technologies for massive multi-user participatory musical situations based on smartphones are already there. Since AI can capture complex implicit knowledge, barriers between listening and producing high-quality music will tend to vanish for non-musicians, bringing user-generated content up to a new level.

IRCAM plays an important role in the AIxMusic Festival. What is your interest in collaborating with a festival like this?

Hugues Vinet: This festival provides a new opportunity in Europe to meet major actors in the field, including researchers, artists, companies and policy-makers, and we are very interested in participating. More generally, the Ars Electronica festival is a fantastic event and attending it is always stimulating.

Can you please explain the different formats in which you are participating?

Hugues Vinet: Seven people connected with IRCAM are involved in the various events, including a workshop on Friday, the improvised performance C’est pour ça on Saturday afternoon, a series of conferences on Sunday morning at the AIxMusic Day, and demos in the exhibition space.

Hugues Vinet is Director of Innovation and Research Means of IRCAM and has managed all research, development and tech transfer activities at IRCAM since 1994 (150 persons). He has directed many collaborative R&D projects and is currently Coordinator of the STARTS Residencies program. He also curates the Vertigo Forum art-science yearly symposium at Centre Pompidou. He participates in various bodies of experts in the fields of audio, music, multimedia, information technology and technological innovation.

IRCAM is part of the AIxMusic Festival, which takes place as part of the Ars Electronica Festival from September 5 to 9. Consult our website for details.
