In this interview, Gerfried Stocker presents the theme and content of the 2013 Ars Electronica Festival.

What’s the title of this year’s festival and what’s it all about?

The theme of this year’s festival is TOTAL RECALL – The Evolution of Memory. The subject is memory—how memory is formed, preserved and passed on, as well as how it’s lost. We’ll be looking at three areas—nature, culture and technology—and also considering future prospects.

We’ll take a look at how memory originates in nature, with the emphasis on human beings because that’s most relevant for us. We’ll examine how culture and technology process memory. Under the heading of Global Memory, we’ll confront issues having to do with Big Data and the Global Brain, the internet. Future Memory frames a consideration of what’s emerging on the horizon, and an encounter with how society’s dealings with memory will change in the future.

One particular challenge is posed by the terms remembering and memory, which sound a bit like what you learn in history class in school. The term memory in the modern sense, of course, is more closely associated with storing data.

The pyramids, built for eternity to display the power of the pharaohs. Everyone is afraid of time, but time is afraid of the pyramids. Photo credit: J. Griffin Stewart

Human Memory: what will we be getting into here?

Human Memory means an encounter with the neurosciences, one in which we’ll be dealing with both philosophical as well as scientific questions, matters like how memory functions and forms, and what is it exactly that memory evokes in the human brain. What effect does remembrance have on us—I mean, after all, memory is one of the basic components of the human personality, identity, consciousness. Memory constitutes one of the most basic building blocks of human existence. Without memory, there can be no consciousness, no identity.

This is precisely why it’s so fascinating to have the neurosciences playing a corresponding role at the festival. Nevertheless, we won’t be going into concrete research results; rather, we’ll be presenting and scrutinizing the methods being used by those performing research on the brain. We’ll be hearing not only from experts in the field of memory, but also from researchers working in many of the various other areas that are now the key determinants of how we think about our world. This is where genetic engineering has played the leading role over the last 20 years, but where neuroscience has now assumed the dominant position as the natural science that has come to the fore to characterize, manifest and change our image of what a human being is.

What about Global Memory?

The second area, Global Memory, will deal with how we as a culture work with memory and data storage. Beginning, of course, with the question of why we want to preserve all this stuff, why we go to all the trouble of excavating and analyzing some clay potsherds. This will offer an opportunity to segue into philosophy and to the question of why memory is so important for us. This brings us back to consciousness and identity. Why do we invest all this technical effort? Why are we incessantly developing technologies with which we can store more and more information?

The CERN data center, state of the art in data processing.

This, in turn, leads to an analysis of our current situation, the widespread impression of living in an age in which people are completely deluged by data. But this is an experience that has often recurred down through humankind’s cultural history. Every time new technologies emerge, people have had the impression that so much information is being produced and stored that nobody is able to process it anymore. This happened, for instance, when Leibniz was commissioned to set up a library and, in going about it, developed the decimal classification system. His mission was to use the means of information processing to create order, but the ultimate upshot was that people came to believe that they were no longer able to grasp so much information.

The same holds true for the development of movable type and the invention of the mechanical printing press, which meant you no longer had to print books and newspapers manually, and they could be produced in enormous quantities. And here as well, some people—laymen and academicians alike—reacted with moaning and groaning about being flooded with information.

It was the same with radio and TV, of course, and now more than ever with the computer. Nowadays, we have the feeling that all the information floating around on the internet amounts to an incomprehensibly large quantity, even though only 32% or 33% of people have access to the internet at all. So if we think that it’s already gotten to be too much, then maybe we should cut the sniveling and consider what it’s going to be like when half or two-thirds of humankind can get online. Then, storage capacity will have to quickly multiply once again.

And, of course, we’re faced yet again by an unanswerable question: Why do we even do all of this? Why do we store all of this to memory when we’ve long since realized that we can’t deal with it, that it’s gotten to be much too much?

Although floppy disks are hardly ever used today, they are still the symbol for saving. Credit: KDE

So by beginning at this year’s festival with a consideration of how data storage technologies function, we’ll be in a position to make a smooth transition to the question of what really happens when, for example, you enter a term into the Google “Search” field and your answer appears before you’re even done typing your query. I hope we succeed to some extent in accurately representing this gigantic technological Babylon. After all, it’s hardly comparable with anything else: in its sheer magnitude, in its speed, in the algorithms that have to be programmed there, in the data centers that constantly have to be kept aligned and, of course, in the extent to which the whole thing can be manipulated.

The internet is something that can give us access to humankind’s entire store of knowledge. But the more it’s able to do this, the more it’s compromised by commercial interests and business models. Everyone who works at two locations is familiar with this problem: the search results you get on the go with your iPad differ from those you get in the office with your desktop. Data at one location differ from those at another.

And the knowledge available on the internet is by no means accessible to everyone. There are plenty of networks that are sealed off from the outside world and can be accessed only by certain individuals or those who pay for the privilege. The festival will deal with this as well, with universal access to the global memory. After all, what good does internet access do me if, for example, I’m a student in Lagos and I can’t get access to knowledge from universities in the United States?

We live in the time zone with the second largest number of internet users, one in which Germany and France are, of course, very large but Lagos has the biggest influence, and there it’s mainly in the form of smartphones. So, there is internet access, but this simple statement gives rise to a highly idealized picture in which pupils are able to work with the internet in schools. This is, needless to say, far removed from reality. In reality there is only very limited access, and the statistics give a highly distorted picture of it.

Future Memory is the third major thematic cluster …

Future Memory will elaborate on how humankind will deal with this issue in the future. Here, we’ll go into data centers, a topic that provides beautiful examples of how memory storage looked 20 years ago, what it looks like now, and how it will look in the future.

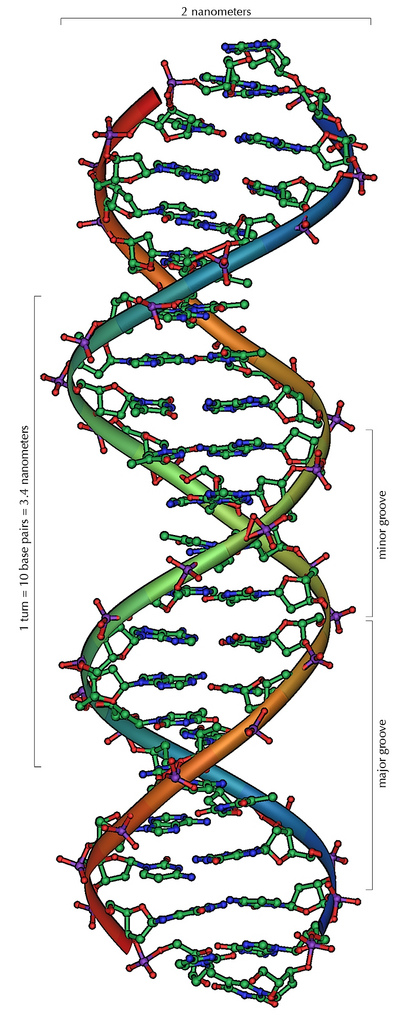

For me personally, one of the most exciting developments in this area is storing data on DNA. Here, our friend George Church has also played a key role. A team of researchers successfully encoded a video on DNA and sent it from the USA to Europe, and the reportage about this feat circulated a number that fascinated me. According to this article, the entire data volume of CERN, which is, you must admit, an extremely large quantity—the local data center as well as the worldwide network—could be stored on 40 mg or 40 g of DNA.

DNA, the future of memory? Credit: Michael Ströck

To accomplish this, the data is converted into ACTGs, which is basically quite easy. Scientists have long known how to do this. The hard part, just like saving information to other data storage media, is the decoding process. If you merely deposit the data, you can’t decode it anymore, which means that you need a complex structure with error correction mechanisms so that you can access the data in a structured way. In any case, what comes out in the end is a series of ACTGs that you can synthesize, a white powder that’s, so to speak, DNA. Naturally, you can also transfer it to living creatures, to bacteria, from which it’s for the most part simply excreted. But, of course, you can also set this up in a way that the DNA combines with the living creature.
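As a rough illustration of the two steps described above (converting data into bases, then adding enough structure to read it back reliably), here is a minimal Python sketch. Everything in it is an assumption made for illustration: the two-bits-per-base mapping, the function names, the two-byte fragment index and the one-byte checksum are hypothetical and are not the scheme used by the researchers mentioned in the interview. The checksum only detects a damaged fragment; real systems add redundancy so that errors can also be corrected.

```python
# Hypothetical mapping: each base carries two bits (A=00, C=01, G=10, T=11).
# The order of the letters is an arbitrary choice for this sketch.
BASES = "ACGT"


def bytes_to_dna(data: bytes) -> str:
    """Encode each byte as four bases, most significant bit pair first."""
    return "".join(
        BASES[(byte >> shift) & 0b11]
        for byte in data
        for shift in (6, 4, 2, 0)
    )


def dna_to_bytes(strand: str) -> bytes:
    """Invert bytes_to_dna; the strand length must be a multiple of four."""
    if len(strand) % 4:
        raise ValueError("strand length must be a multiple of 4")
    out = bytearray()
    for i in range(0, len(strand), 4):
        byte = 0
        for base in strand[i:i + 4]:
            byte = (byte << 2) | BASES.index(base)
        out.append(byte)
    return bytes(out)


def make_fragments(data: bytes, size: int = 8) -> list[str]:
    """Split data into short strands, each with an index and a checksum."""
    fragments = []
    for index, start in enumerate(range(0, len(data), size)):
        chunk = data[start:start + size]
        header = index.to_bytes(2, "big")  # position of this chunk
        checksum = bytes([sum(header + chunk) % 256])  # detection only
        fragments.append(bytes_to_dna(header + chunk + checksum))
    return fragments


def read_fragments(fragments: list[str]) -> bytes:
    """Reassemble strands in index order, rejecting damaged ones."""
    chunks = {}
    for strand in fragments:
        raw = dna_to_bytes(strand)
        body, checksum = raw[:-1], raw[-1]
        if sum(body) % 256 != checksum:
            raise ValueError("checksum mismatch: fragment is corrupted")
        chunks[int.from_bytes(body[:2], "big")] = body[2:]
    return b"".join(chunks[i] for i in sorted(chunks))
```

The index is what makes structured access possible: the strands can arrive in any order, as they would in a vial of powder, and `read_fragments` still returns the original bytes. Without the index and the checksum, `dna_to_bytes` alone would have no way of knowing where a strand belongs or whether it was damaged.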

In 1999 or 2000, we had this project by Eduardo Kac. He took the passage in Genesis in which God commanded human beings to subdue the Earth, and Kac transformed it into a DNA sequence. He had the DNA synthesized and then, so to speak, contaminated E. coli bacteria with it. Back then, it was simple for him to travel from the USA to Europe; today, it would be much more difficult to make the trip with this stuff.

In any case, the challenge is to save the data to memory in such a way that they can be re-accessed in error-free form, and this, of course, is no trivial matter. But engineers are already succeeding in doing this; prototypes already exist. Now, you can compare this to the achievements of 1957 or 1958, when the first transistors were built. I mean, these were not yet components that could have been used to construct a computer, but this was a breakthrough that led to what we have today: processors and other devices that pack millions of transistors onto a few square millimeters. That was 50 years ago. There’s a relatively high probability that, 50 years from now, we actually will be able to store the entire quantity of data generated by CERN on 40 grams of powder.

The problem will be that, over these next 50 years, the volume of data will increase to such an extent that we’ll probably once again need something new and be faced with the same problem we’re confronted by now. The more capacity you have the more data you get; and the more data you have the more capacity you need! There’s no end to this. And the impression we have that the end is somewhere up ahead—well, keep in mind that people have often had this impression over the course of history.

This reminds me of the frequently cited example of how some people used to say that travelers shouldn’t exceed the speed of 30 km/h because the human brain couldn’t handle it. And now we have a fellow jumping out of a balloon and breaking the sound barrier.

It’s a similar story with human cognitive capabilities. After all, we’re not really smarter than we were 4,000 years ago, or more intelligent than the ancient Greeks. Nevertheless, we’ve learned to deal with things, to ignore things. Perhaps storing cultural techniques to memory has enabled us to clear up quite a bit. Backup is deletion for pussies; if you don’t have the intestinal fortitude to take leave of something, then just save it and forget it.

In any case, the festival will shed light on both the hardware and the software levels. What will the data centers of the future look like, and what about the algorithms designed to distribute content and make it globally accessible? And we’ll come full circle by considering the question of whether we’ve reached the point of being able to synthetically model the human brain and thus approach the exact point at which memory originates. Projects such as Blue Brain in Switzerland and SyNAPSE at IBM, which have already made considerable progress in simulating the complexity of the neuronal networks of simple brains, make it appear likely that it’s only a question of time until the computational structures are available to create silicon models of these networked processes of the brain.

At this point we’ll have reached the realm of fiction and philosophy, and we can ask what really happens at this level of complexity. Will this give rise to something like auto-emergent intelligence?

These are, for the most part, not new issues. But when we succeed in coming full circle, then Ars Electronica will once again be in a position to host a holistic encounter with this topic on the basis of art, technology and society. We’re not staging a scholarly conference on the neurosciences; instead, what we aim to do is to span a complete arc. Fundamentally, this theme is totally relevant as a current development and as a confrontation with a pressing issue, which is why we want to consider it in a larger context.

TOTAL RECALL – The Evolution of Memory will run September 5-9, 2013 in Linz. Details about the program, artists and speakers are online at ars.electronica.art/totalrecall.