The rise and fall of the sea level, the water temperature, the humidity, the gravitational attraction of the Moon and the position of the human being observing these phenomena: maotik has used all of these real-time data streams to create a visualization that premiered at the 2016 Ars Electronica Festival and is now running in Deep Space 8K at the Ars Electronica Center. In this interview, he explains how he came up with the idea and finally …

Your work is all about visualising nature – and daydreams. Could you tell us a little bit about the beginning of this project?

Maotik: I am interested in developing intuitive tools and interfaces that give me full control of different media, because they let me explore new forms of experience and new languages. I have always been more interested in event-based visuals than in pre-rendered videos. Even if generative visuals still don't reach the rendering quality of pre-rendered graphics, this is compensated by the potential for iteration and the ability to improvise. In that way, each performance can lead to a creation open to a multitude of perceptions.

The idea is to give the system enough intelligence and randomness that the visuals become organic and unpredictable. Over the last few years I have mainly focused on experimenting with sound and visuals. This project was an opportunity to expand my research by adding real-time data, transforming data about nature into a poetic interpretation. It mirrors the relationship between nature and art in order to reconsider the implications of human presence in the natural world.

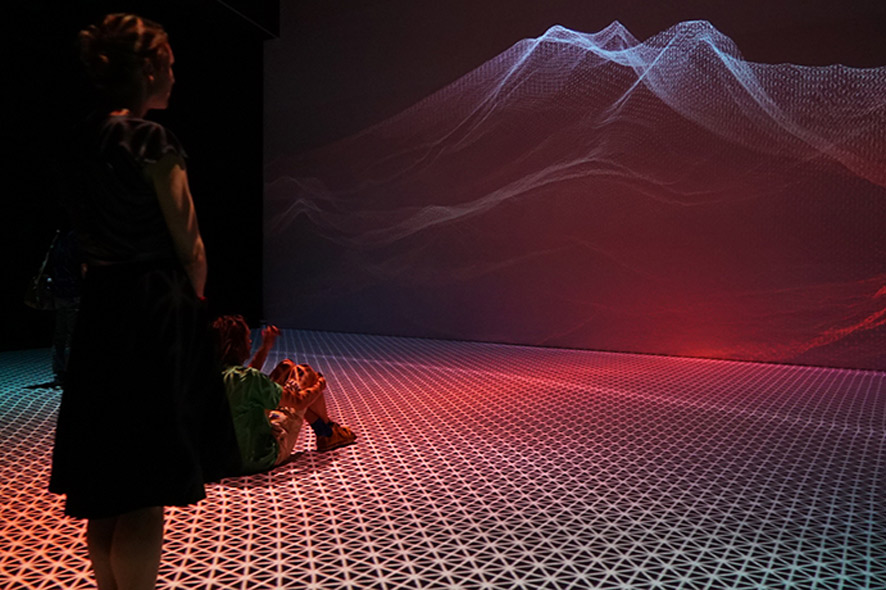

The idea for this project came to me after visiting Deep Space 8K: the walking space and the immersive screen gave me the idea of working with the horizon. Ars Electronica gave me the opportunity to spend three days testing my content, and then I developed the project I presented last September.

Credit: maotik

What kind of data do you use in your installation, how do the numbers interact and why did you choose these units?

Maotik: I grew up by the Atlantic Ocean and I have always been charmed by the scenery of the horizon, especially the fact that it changes visually according to parameters such as sea level, wind or weather. You can go to the coast every day and you will always see something different. I thought that was really something interesting to explore using a generative system. My idea was to represent these morphing landscapes in an immersive multimedia experience.

The ocean can be a relaxing and playful place, but at the same time it can be dangerous and really imposing to humans, reminding us how small we are compared to nature and why we have to respect it. I started developing my ideas with Mickael Skirpan, a programmer and PhD student I met in Boulder, Colorado, in May 2016, who had already worked with data on various kinds of projects. He helped me retrieve the parameters I found interesting. Unlike sound data, real-time information retrieved from a database is not as easy to visualise. When I work with a sound composer, I can recognise the data with my sense of hearing and understand the gesture. It is more challenging with data retrieved from a database, as it can't be perceived with any sense.

I used 11 parameters to define the form of the ocean. We connect to a database and retrieve data such as sea level, tide coefficient, humidity, weather, wind force, wind direction, moon cycle, location and time of day. While parameters such as wind force or sea level affect the movement of the sea, others such as the weather or humidity change the colors. The difficult part was creating algorithms that would always maintain an interesting aesthetic, both visually and sonically.
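The interview only says that motion-related parameters (wind force, sea level) drive the water's movement while weather-related ones (weather, humidity) drive its colors. As a minimal sketch of that split, the plain-Python example below normalises each reading, clamps it, and blends between hand-picked extremes. All field names, units, input ranges and colors are hypothetical assumptions, "cloud cover" is a stand-in for "weather", and nothing here is TouchDesigner's API or the artist's actual code.

```python
from dataclasses import dataclass


@dataclass
class OceanReading:
    """One snapshot of input data. Fields, units and ranges are hypothetical."""
    sea_level_m: float      # sea level relative to a reference, in metres (assumed)
    wind_force_bft: float   # wind force on the Beaufort scale, 0-12 (assumed)
    humidity_pct: float     # relative humidity, 0-100
    cloud_cover_pct: float  # hypothetical stand-in for "weather", 0-100


def normalize(value: float, lo: float, hi: float) -> float:
    """Map value from [lo, hi] onto [0, 1], clamping out-of-range readings."""
    t = (value - lo) / (hi - lo)
    return max(0.0, min(1.0, t))


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation between a (t=0) and b (t=1)."""
    return a + (b - a) * t


def map_to_visuals(r: OceanReading) -> dict:
    # Motion group: wind force and sea level drive the water's movement.
    wind = normalize(r.wind_force_bft, 0.0, 12.0)
    level = normalize(r.sea_level_m, -3.0, 3.0)  # assumed range
    wave_amplitude = lerp(0.1, 1.0, 0.7 * wind + 0.3 * level)
    wave_speed = lerp(0.2, 2.0, wind)

    # Color group: weather (cloud cover) and humidity shift the palette.
    cloud = normalize(r.cloud_cover_pct, 0.0, 100.0)
    humid = normalize(r.humidity_pct, 0.0, 100.0)
    clear, overcast = (0.10, 0.45, 0.80), (0.45, 0.50, 0.55)  # RGB in [0, 1]
    color = tuple(lerp(c, o, cloud) for c, o in zip(clear, overcast))
    # Humidity mixes the color toward a grey haze, lowering saturation.
    haze = 0.6
    color = tuple(lerp(c, haze, 0.4 * humid) for c in color)

    return {"wave_amplitude": wave_amplitude, "wave_speed": wave_speed, "color": color}
```

Clamping every input before blending is one simple way to keep the output inside a range that has been checked by eye, so an extreme or faulty reading cannot push the visuals into an unpleasant state:

```python
calm = map_to_visuals(OceanReading(0.0, 0.0, 0.0, 0.0))
storm = map_to_visuals(OceanReading(5.0, 20.0, 100.0, 100.0))  # out-of-range values are clamped
```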

Credit: maotik

What programming language did you use, and what were the challenges in designing this project for Deep Space 8K?

Maotik: This project was developed with TouchDesigner, a visual development platform that I have been using for the last four years to produce projects ranging from fulldome immersive AV performances to interactive installations. TouchDesigner gives you the tools to create advanced real-time graphics. It is a visual programming environment, but it also lets you write scripts in Python and shaders in GLSL.

The challenge in creating content for an immersive environment is to design a visual composition that can change the perception of the space. Inside a dome you can easily change the perceived size of the room, since the viewer's position in the space does not really matter. Deep Space is different: it is more like video mapping, where the viewpoint is important for creating a trompe-l'oeil effect. Deep Space has a walking platform and also an upper level from which the audience can view the content. So I had to make a visual composition that would be interesting from any point of view.

The other challenge was finding a connection between the front and floor projections. As the content is generated by two different computers, I had to find a way to make the two environments visually coherent and able to interact with each other.

Credit: maotik

How did you feel when you were moving through your own visuals here at the Ars Electronica Center for the first time?

Maotik: Through this installation I wanted to communicate the excitement of experiencing something that is happening here and now. In general, I think simple interaction works most effectively: while walking on the platform, visitors can generate waves, and watching moving water always has a calming effect. The immersive projection and the surround sound system help simulate the sensation of walking by the ocean, this monumental, massive shape that is constantly changing form.
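The interview does not describe how footsteps turn into waves. One common technique for interactive water is the two-buffer ripple scheme, a discretised 2D wave equation. The plain-Python sketch below shows that technique under stated assumptions: visitor positions are assumed to arrive as grid cells (for example from some floor-tracking system), and the class name, grid size and damping factor are hypothetical. It is not the installation's actual implementation.

```python
class RippleField:
    """Hypothetical floor model: a 2D height field updated with the
    classic two-buffer ripple scheme (a discretised wave equation)."""

    def __init__(self, width: int, height: int, damping: float = 0.98):
        self.w, self.h, self.damping = width, height, damping
        self.prev = [[0.0] * width for _ in range(height)]  # frame n-1
        self.curr = [[0.0] * width for _ in range(height)]  # frame n

    def step_on(self, x: int, y: int, strength: float = 1.0) -> None:
        """A visitor's footstep pushes the surface down at cell (x, y)."""
        self.curr[y][x] -= strength

    def update(self) -> None:
        """Advance one frame; border cells stay at zero (fixed edges)."""
        nxt = [[0.0] * self.w for _ in range(self.h)]
        for y in range(1, self.h - 1):
            for x in range(1, self.w - 1):
                neighbours = (self.curr[y - 1][x] + self.curr[y + 1][x]
                              + self.curr[y][x - 1] + self.curr[y][x + 1])
                # u[n+1] = (sum of 4 neighbours) / 2 - u[n-1], then damped
                nxt[y][x] = (neighbours / 2.0 - self.prev[y][x]) * self.damping
        self.prev, self.curr = self.curr, nxt
```

A footstep followed by a few updates produces a ring that spreads outward and fades. The damping factor below 1 is what keeps the scheme stable and lets the water settle once visitors stop moving:

```python
floor = RippleField(9, 9)
floor.step_on(4, 4)
for _ in range(10):
    floor.update()
```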

I am always interested in observing how people experience a multimedia environment: some people just move slowly, others challenge the floor's interactivity and start running and jumping to generate waves, and still others just sit down and meditate on the changing scenery.