From October to December 2013, media artist and electronic musician Ignacio Cuevas Puyol, aka White Sample, stayed as artist in residence at the Ars Electronica Futurelab. During his residency, Ignacio designed a synthesizer module: the DLN, or Digital Logic Noise Generator. The aim of the Eurorack Modular Synth Module Project was to bring the technique of “C one-liners” applied to audio into the analog world by building a programmable module that can be controlled by other modules through voltage-controlled gates. At the end of his residency, Ignacio demonstrated the Digital Logic Noise Generator together with a few members of the Ars Electronica Futurelab in a live performance at the Deep Space.

To follow up on the success of the first performance, Ignacio again teamed up with raum.null, consisting of the Futurelab’s Chris Bruckmayr and Serbian sound artist and researcher Dobrivoje Milijanovic, for a live performance at the Ars Electronica Festival 2014. Along with the Digital Logic Noise Generator, other electronic devices, such as a modular synthesizer and analog noise boxes, were thrown into the mix. For the live visuals, the sound artists were supported by Futurelab members Veronika Pauser (VeroVisual) and Peter Holzkorn (voidsignal), as well as Michael Platz, who contributed a body controller he had developed, to be used by Veronika as another way to influence the visuals in real time.

[youtube=http://www.youtube.com/watch?v=c4YWwhSXlog&w=610]

The extraordinary and daring performance using new technologies on the Friday night of the Ars Electronica Festival was an overall success, with twice as many people attending as the team had expected, and positive feedback from the audience. In the following interview, the contributors give you some insights into the idea and the technical background of the performance.

Ignacio, it was great to have you back in Linz. How was it to come back and to work on a new performance with a few members of the Ars Electronica Futurelab?

Ignacio Cuevas Puyol: Everything turned out really well; it was wonderful to be a part of the festival. I am really happy with the result after working with Chris, Veronika, Peter and Michael from the Futurelab, with Dobrivoje taking care of the sound engineering. The whole experience was very positive, and we were very glad to hear good comments from the audience.

Could you give us some background to the concept of the performance and how it developed?

Ignacio Cuevas Puyol: We named this performance QUADRATURE because we started with the concept of four elements mixed together: two sound sources (raum.null and White Sample) and two video sources (VeroVisual and voidsignal).

Impression Quadrature (Still from the video footage by Michael Mayr)

The ambience of the performance was based on a kind of ritual look and feeling: we played on the floor, almost crawling over cables and audio devices, with the big projection behind us controlled by Veronika and Peter, showing black-and-white images from nature overlaid with generative geometries and parametric effects. There was this dark and occult vibe going on, with light emerging from the audio frequencies that went to the video side and controlled some parameters, so everything was like a big wave of darkness and light dancing with this cloud of noise, moving and transforming in time.

We came to this idea through emails, talking about the concept and the techniques involved. I have to say Veronika and Peter did a great job, because the result was even better than what I had in mind. Chris and I have a strong connection through analog noise, so I was pretty confident about his part.

In terms of structure, we had no limitations about intro/dev/outro during the performance, because it worked as a natural system. I like to think of it in terms of intensity, something like the weather: changing naturally in its own way, going from sunny to rainy and everything in between.

Impression Quadrature (Still from the video footage by Michael Mayr)

Would you give us a short overview of the technical setup that you used for realizing this idea?

Ignacio Cuevas Puyol: I was playing my modular synthesizer with around 30 different modules. One of those was the Digital Logic Noise Generator I built last year during my residency at the Ars Electronica Futurelab. This little module was the seed of the first performance and later of this one during the festival.

[youtube=http://www.youtube.com/watch?v=bGfqWjRNL38&w=610]

Now let’s talk about the sound. Ignacio, Chris and Dobri, the sound was your part. How did you use the Digital Logic Noise Generator, and how have your ideas and work developed since the performance last year?

Ignacio Cuevas Puyol: The DLN produces sound and is controlled by other modules such as sequencers, gate generators, LFOs, etc. It has four separate channels plus a sum, and each of these channels went into other modules to create a complex synthesis. Along with this, I had other modules producing sounds (oscillators) controlled by the same sequencers, dividers, logic operators, etc. that were controlling the DLN.
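To make the idea of gated one-liner channels more concrete, here is a minimal software sketch in Python. It is not the DLN's firmware or circuit: the four formulas, the 8 kHz sample rate, the clock-divider gate pattern, and the averaging used for the sum output are all assumptions chosen for illustration. Each channel evaluates an integer expression of a running counter `t`, keeps the low 8 bits as a sample, and is silenced while its gate is closed.

```python
# Sample rate assumed for illustration; the interview does not state one.
SAMPLE_RATE = 8000

# Four hypothetical one-liner formulas, one per channel. For the operators
# used here (*, >>, &, |) Python follows the same precedence as C.
FORMULAS = [
    lambda t: t * ((t >> 12 | t >> 8) & 63 & t >> 4),
    lambda t: t & t >> 8,
    lambda t: t * (42 & t >> 10),
    lambda t: (t * 5 & t >> 7) | (t * 3 & t >> 10),
]


def channel_sample(formula, t, gate_open):
    """Return one unsigned 8-bit sample, or 0 while the gate is closed."""
    return formula(t) & 0xFF if gate_open else 0


def divider_gates(t):
    """Hypothetical gate source: four clock-divider outputs derived from t."""
    return [bool((t >> (8 + i)) & 1) for i in range(4)]


def dln_step(t, gates):
    """Return the four channel samples and their mix for counter value t."""
    outs = [channel_sample(f, t, g) for f, g in zip(FORMULAS, gates)]
    # Assumption: the sum output is modelled as an average to stay in 0..255.
    mix = sum(outs) // len(outs)
    return outs, mix


def render(num_samples):
    """Render the mix output as raw unsigned 8-bit mono samples."""
    return bytes(dln_step(t, divider_gates(t))[1] for t in range(num_samples))
```

Calling `render(SAMPLE_RATE)` yields one second of samples at the assumed rate. In the real module the gates come from other modules as voltages; `divider_gates` only stands in for that.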

After playing with Chris last year, I was really excited about the possibilities of mixing our sounds. During my residency I used the Soundlab at Ars Electronica a lot, and jamming with Chris in there was always a very nice thing; we were both very happy with the results.

During the performance we had Dobri in the middle doing the mixing from my setup and Chris’s setup, controlling levels and sampling some parts from both of us, creating a third layer of noisy loops. This was a brilliant idea in my opinion.

raum.null: The general concept behind the organic soundscape is the sonic intermingling between the more electronic sounds of the modular synthesizers and the industrial sounds of a network of analog experimental sound generators fed through a looping system handled by Dobri. This gives us a multilayered sound environment that’s constantly evolving during the performance. The sonic landscape is reflected in the visuals: organic, biological structures and clean geometry, life on this planet and the hidden mathematical forces behind it.

Impression sound artists and setup (Still from the video footage by Michael Mayr)

Veronika and Peter, you were responsible for the visuals. You used some special gimmicks in the live performance, like a body controller to influence the visuals in real time, and the programming of the live renderings was another extraordinary challenge. Would you give us some glimpses behind the scenes?

Veronika Pauser: Ignacio approached us with some clear ideas about the visuals accompanying his sounds. He sent some images to give an impression of the mood he wanted to communicate. When I was discussing these aspects with Chris Bruckmayr, we developed the idea of the “brutal nature which is reconquering urban territories” as the general concept for the visual output.

As a result, nature was a big topic in the final visuals, so we used elements such as clouds, forest, sea… Additionally, Peter overlaid the video footage with real-time processed content, which created a relatively surreal atmosphere.

We extended this combination of new technological developments with the natural and traditional to the performance and technical setup: we used Electric Paint to create my facial painting, reminiscent of Tā moko, the traditional facial tattoo of the Maori. This Electric Paint was connected to a body controller which Michael Platz developed for a raum.null performance earlier this year.

I’m very open towards such things, as I always try to integrate new technologies in my performances. I have often done this with gaming interfaces such as the Nintendo Wii or the Microsoft Xbox Kinect, which enabled me to use body movements for controlling the visual output. In this performance I moved one step further by using my skin as the actual controller.

How did you apply the Electric Paint to your face?

Veronika Pauser: Another colleague of mine, Michael Mayr, made a template illustration reminiscent of these facial Maori tattoos, which I printed with a cutting plotter on adhesive foil. This gave us the negatives of the template, which we stuck on my face and colored in with Electric Paint in a one-hour painting session.

Making of Electric Paint (Photo: Peter Freudling)

Electrodes then connected the Electric Paint on my face, as well as the forefinger of my right hand, to the body controller. When I touched a certain area of my face, an electric circuit was closed and a MIDI note was triggered, which I mapped to a certain effect in the software. As a result, I was able to control the visuals by touching my skin.
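On the software side, the mapping from a triggered note to an effect can be pictured roughly like this. The sketch is in Python and is only an illustration: the note numbers and effect names are hypothetical, and the actual mapping was configured in the VJ software rather than written as code like this. It relies only on the standard MIDI convention that a note-on status byte has the high nibble `0x9` and that a note-on with velocity 0 counts as a note-off.

```python
NOTE_ON = 0x90  # standard MIDI note-on status (channel in the low nibble)

# Hypothetical mapping from MIDI note numbers to effect names.
NOTE_TO_EFFECT = {
    60: "invert",
    62: "strobe",
    64: "feedback",
}


def effect_for_message(message):
    """Return the effect triggered by a 3-byte MIDI message, or None.

    `message` is a (status, note, velocity) tuple of integers.
    """
    status, note, velocity = message
    is_note_on = (status & 0xF0) == NOTE_ON and velocity > 0
    if not is_note_on:
        return None
    return NOTE_TO_EFFECT.get(note)
```

For example, `effect_for_message((0x90, 60, 100))` returns `"invert"` under this hypothetical table, while a note-off or an unmapped note returns `None`.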

At a certain point in the performance my face was also shown in the visuals, overlaid with Peter’s particles, which amplified this surreal effect.

(GIF: Veronika Pauser)

In general, I got used to having this futuristic Cyborg-look. Before the performance we did a test run by painting a small floral pattern on my face. I left it on my face, and on the street I had the impression that people were more open-minded when approaching me. They were very positive, fascinated, and smiling at me. Maybe they just thought I’m crazy, but I really enjoyed their positive attitude towards me.

Peter, what do you want to add about the visuals?

Peter Holzkorn: Conceptually, we both have our aesthetic interests, and this time Ignacio also gave us a general direction in which he wanted the visuals to go. Veronika and I reviewed our respective contributions together, agreed on a loose dramatic arc, and otherwise let the sound lead us during the performance.

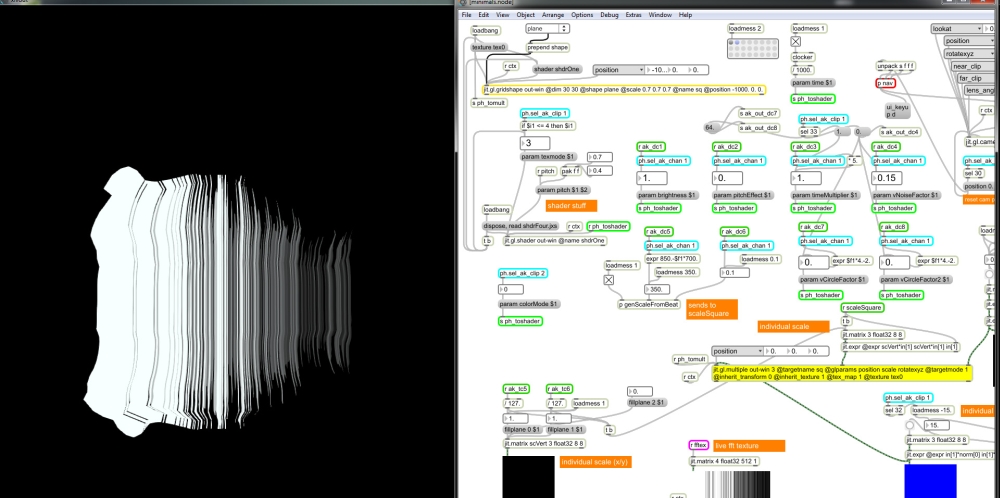

(Screenshot: Peter Holzkorn)

Like in last year’s performance, we used a two-stage setup: I had developed a couple of programs that were producing real-time generative graphics, and these were fed into a VJ-toolkit where Veronika was combining them with video material and effects. Both the generative programs and the VJ-software were responding to live sound analysis as well as haptic controls in the form of MIDI-controllers and the body controller. Using this setup, both of us had the freedom to control our respective components independently during the live performance, while the output, the sum of these parts, was more dynamic and interesting than an individual effort could have been.
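As a rough picture of what "responding to live sound analysis" can mean, the following Python sketch turns audio frames into a smoothed level that could drive a visual parameter such as brightness. It is an assumption-laden illustration, not the analysis used in the performance: the choice of RMS as the measure, the smoothing factor, and the function names are all hypothetical.

```python
import math


def rms(frame):
    """Root-mean-square level of one frame of samples in the range -1..1."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def smooth(previous, target, amount=0.2):
    """Move `previous` a fraction `amount` of the way towards `target`."""
    return previous + amount * (target - previous)


def drive_parameter(frames, amount=0.2):
    """Return one smoothed 0..1 control value per audio frame."""
    level = 0.0
    levels = []
    for frame in frames:
        level = smooth(level, min(1.0, rms(frame)), amount)
        levels.append(level)
    return levels
```

The smoothing step keeps the parameter from flickering with every frame; a larger `amount` makes the visuals follow the sound more tightly.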

Technically, there are two points I want to mention from my side: First, I want to thank Florian Berger (another member of the Ars Electronica Futurelab) for his support in creating one of the generative algorithms – it’s great to be surrounded by such talented and helpful colleagues. Second, the setup in its current shape was made possible by Spout, a fantastic new library that allows inter-process GPU-based texture sharing on Windows, i.e. it does on Windows what Syphon does for OS X. This enabled us to choose the best tools (Max/MSP, Cinder, Resolume) for each part.

(Screenshot: Peter Holzkorn)

Michi, you were the one who developed the body controller. Could you please give us some insights into the device, what you are doing and where you want to take it?

Michael Platz: In spring 2014 Chris Bruckmayr came up with the idea to use BareConductive’s Electric Paint for one of his upcoming performances (Fuckhead/raum.null: “Who am I”) to trigger audio samples and loops by touching certain parts of his body where the Electric Paint was applied. The idea for the body controller was born.

As a first prototype, I developed a wearable MIDI input device using the Atmel ATMEGA32U4 microcontroller and the LUFA open-source USB stack. The BodyController can be worn during on-stage performances directly on the body without disturbing the artist.

When Veronika mentioned that she wanted to use the body controller during the Quadrature performance to influence her visuals in real time, we tackled the remaining problems, came up with a sturdier version, and expanded the MIDI device up to eight MIDI channels.

The body controller is a generic USB MIDI device and is supported by nearly all popular operating systems (Windows, Linux, AppleOS,…). This opens up numerous possibilities: I want to try using Electric Paint applied directly to the body of a performer in an artistic way for real-time interaction during different on-stage live performances.
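A generic USB MIDI device sends its messages as 4-byte event packets, as defined in the USB MIDI 1.0 class specification: a header byte holding the cable number and a code index number, followed by the three MIDI bytes. The Python sketch below builds such packets for note-on and note-off events. It is not the BodyController firmware and does not use LUFA; the function names, the default velocity, and the idea of assigning one touch area per MIDI channel are assumptions for illustration.

```python
CIN_NOTE_OFF = 0x8  # code index number for note-off (USB MIDI 1.0)
CIN_NOTE_ON = 0x9   # code index number for note-on (USB MIDI 1.0)


def _check(name, value, upper):
    """Raise ValueError unless 0 <= value <= upper."""
    if not 0 <= value <= upper:
        raise ValueError(f"{name} must be in 0..{upper}, got {value}")


def usb_midi_packet(cin, status, channel, note, velocity, cable=0):
    """Build one 4-byte USB-MIDI event packet for a channel voice message."""
    _check("cable", cable, 15)
    _check("channel", channel, 15)
    _check("note", note, 127)
    _check("velocity", velocity, 127)
    return bytes([(cable << 4) | cin, status | channel, note, velocity])


def usb_midi_note_on(channel, note, velocity=127, cable=0):
    """Packet for a touch closing the circuit (hypothetical usage)."""
    return usb_midi_packet(CIN_NOTE_ON, 0x90, channel, note, velocity, cable)


def usb_midi_note_off(channel, note, cable=0):
    """Packet for the circuit opening again (hypothetical usage)."""
    return usb_midi_packet(CIN_NOTE_OFF, 0x80, channel, note, 0, cable)
```

With eight touch areas mapped to channels 0 to 7 under this assumption, `usb_midi_note_on(7, 36, 100)` produces the bytes `09 97 24 64`. Because the packet format is standardized, the host needs no custom driver, which is what makes such a device work across operating systems.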

Is there anything you want to add that we have not talked about yet?

Ignacio Cuevas Puyol: I’d like to say working with members of the Ars Electronica Futurelab is always great. There is this mixture of technical knowledge and artistic knowledge which is amazing. I think everybody involved in this performance was very happy with the output. After talking to the others I know they were very satisfied just like me.

What are the next steps? And are there plans for further collaborative projects and performances?

Ignacio Cuevas Puyol: I would love to keep on collaborating with the Ars Electronica Futurelab. My connection with raum.null is stronger now, and we have some ideas for the future.

We, the whole team, talked a little bit about taking this further, but it is still just an idea, so I won’t reveal anything for the moment.

I just have to say Thank You very much Ars Electronica.

Credits Quadrature

Sound: Ignacio Cuevas Puyol (White Sample), Chris Bruckmayr & Dobrivoje Milijanovic (raum.null)

Visuals: Veronika Pauser (VeroVisual) & Peter Holzkorn (voidsignal)

Body controller: Michael Platz

Special thanks to Michael Mayr (documentation), Florian Berger (code base) and Claudia Schnugg (moderation, organizational support)