What can we imagine under the concept of artificial intelligence, and what do we want to achieve with it? And what do we actually understand about human intelligence, and how can one try to build a model of it?

The question of artificial intelligence (AI) opens up room for speculation about the future, not least because of several old and new unanswered questions: what intelligence means, what we call artificial, and how we distinguish between the non-artificial and the less artificial. What is certain is that, despite the many unresolved layers of meaning in this term, the technologies are already profoundly changing our everyday lives, our global networks, our culture, and our politics. This development naturally raises questions about old concepts and new content, but also about how our social habits work and how we want to align the new technological means with them.

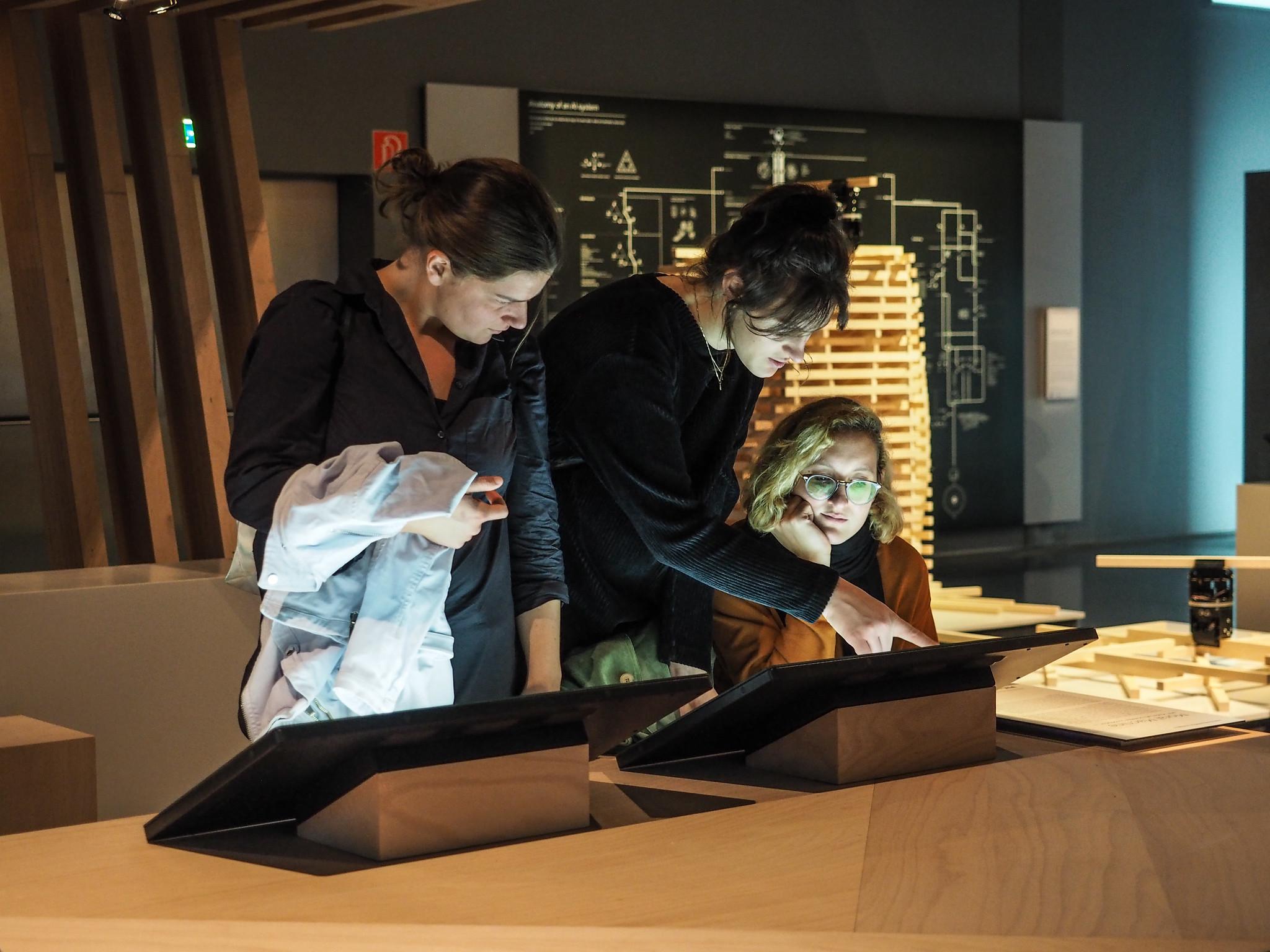

The Ars Electronica Center devotes a large part of its new permanent exhibition to these technologies, with the aim of offering orientation, a compass of sorts for everything new. Under the title Understanding AI, important technical aspects and concrete application examples are placed in social, ecological, political, and ethical contexts, examined critically, and extended creatively. The exhibition does not set out to find the one universally valid answer or solution to the questions that artificial intelligence raises. Instead, visitors should have the opportunity to acquire basic knowledge, find their own points of connection, and engage for themselves with the tensions surrounding AI technologies.

Within the new exhibition, the Ars Electronica Futurelab has developed an educational series for technology-oriented content. A sequence of didactic stations centers on the attempt to illustrate the basic building blocks of various AI technologies and how they work. Neural Network Training uses simplified examples to show how long chains of mathematical operations work as so-called artificial neural networks. Named after and inspired by the signal-conducting cells of the nervous system, such networks sort input data into tendencies or probabilities and categorize it. At the Ars Electronica Center, various networks can be trained as well as observed in use.
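The idea of a network as a chain of mathematical functions that ends in probabilities can be sketched in a few lines of Python. This is only an illustration, not the code behind the exhibition station: the layer sizes, the hand-picked weights in `LAYERS`, and the choice of ReLU and softmax are all assumptions, and the training step that would normally adjust the weights is left out.

```python
import math

def dense(inputs, weights, biases):
    """One layer: a weighted sum of the inputs plus a bias per output unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Simple non-linearity: negative values are cut off at zero."""
    return [max(0.0, v) for v in values]

def softmax(values):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def forward(inputs, layers):
    """Chain the layers; the final scores become category probabilities."""
    activation = inputs
    for index, (weights, biases) in enumerate(layers):
        activation = dense(activation, weights, biases)
        if index < len(layers) - 1:
            activation = relu(activation)
    return softmax(activation)

# Hypothetical, hand-picked weights: 2 inputs -> 3 hidden units -> 2 categories.
LAYERS = [
    ([[1.0, -1.0], [0.5, 0.5], [-1.0, 1.0]], [0.0, 0.0, 0.0]),
    ([[1.0, 0.0, -1.0], [-1.0, 0.0, 1.0]], [0.0, 0.0]),
]

probabilities = forward([2.0, 1.0], LAYERS)
print(probabilities)
```

For the input `[2.0, 1.0]`, the first category receives the larger share of the probability. Training would consist of nudging the numbers in `LAYERS` until such outputs match labeled examples.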

To conclude the educational series, the Ars Electronica Futurelab visualized the individual steps by which a Convolutional Neural Network (CNN), one of the basic building blocks of AI technology and deep learning, categorizes images. In the training phase, the network learns different image features across several filter layers: simple lines, curves, and complex structures are organized independently of one another and form the basis for categorizing images. These filters, which build on one another, are visualized on eleven large displays in the Ars Electronica Center. At the end, a percentage chart shows how the AI system rates an object shown to the built-in camera.
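What a single filter in such a layer does can be shown with a small, self-contained sketch. The 4x4 `IMAGE` and the `VERTICAL_EDGE` kernel below are made up for illustration; in a trained CNN the kernel values are learned rather than written by hand, and many such filters are stacked in layers. As is common in deep learning, the operation slides the kernel over the image without flipping it (strictly speaking, a cross-correlation).

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and sum the element-wise products."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    return [
        [
            sum(image[row + i][col + j] * kernel[i][j]
                for i in range(kernel_h) for j in range(kernel_w))
            for col in range(out_w)
        ]
        for row in range(out_h)
    ]

# Hypothetical 4x4 grayscale image: dark on the left, bright on the right.
IMAGE = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-written filter that responds to a dark-to-bright vertical edge.
VERTICAL_EDGE = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(IMAGE, VERTICAL_EDGE)
for row in feature_map:
    print(row)  # each row is [0, 2, 0]: the filter fires only at the edge
```

The resulting feature map is zero in the uniform regions and positive exactly where the brightness changes, which is the kind of simple line feature that later layers combine into more complex structures.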

Vector Space relates all entries submitted to the Prix Ars Electronica since 1986 to one another across various parameters. The model uses several AI technologies from the fields of text and image analysis to display the archive as a three-dimensional graphic. Image properties, text properties, or a combination of the two determine how similar the entries are and position them relative to one another. Comment AI uses a public project of the Austrian Research Institute for Artificial Intelligence (OFAI) to train a future AI application at the Ars Electronica Center. Visitors can classify comments collected from the online forums of various Austrian newspapers and thereby teach the application different categories, ranging from neutral to hate speech.
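One common way to compare entries once they are described by numbers is cosine similarity between feature vectors. The sketch below assumes this measure purely for illustration; the text does not say which similarity measure Vector Space uses, and the entry names and three-dimensional vectors in `ENTRIES` are hypothetical placeholders for features that a text or image model would produce.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1 = same direction, 0 = unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical feature vectors standing in for archive entries.
ENTRIES = {
    "entry_a": [1.0, 0.0, 1.0],
    "entry_b": [1.0, 0.1, 0.9],
    "entry_c": [0.0, 1.0, 0.0],
}

def most_similar(name, entries):
    """Return the other entry whose vector points in the most similar direction."""
    return max(
        (other for other in entries if other != name),
        key=lambda other: cosine_similarity(entries[name], entries[other]),
    )

print(most_similar("entry_a", ENTRIES))  # entry_b
```

Entries with high mutual similarity would be placed close together in a visualization, while dissimilar ones such as `entry_a` and `entry_c` end up far apart.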

In addition, the Ars Electronica Futurelab developed playful stations for experiencing AI technologies through direct interaction. Drawing on the work Learning to see: Gloomy Sunday by the artist Memo Akten, who is also represented in the exhibition, two stations examine AI technology from the perspective of the disposition toward cognitive self-affirmation. In engaging with phenomena such as prejudice, repression, and the interpretation of situations according to the patterns of past experience and prejudgment, the interactive stations trace these thought patterns in the structures of AI technology.

Pix2Pix: GANgadse turns visitors' drawings into cat pictures. A Conditional Generative Adversarial Network (cGAN) has learned exclusively from cat pictures and therefore interprets all input data as such. ShadowGAN renders the outlines of visitors captured by a camera as landscapes, because the network was trained exclusively on images of such landscapes.

With its questions about the relationship between human and technical reality, the exhibition aims to demystify the techniques and applications behind the concepts of artificial intelligence. As buzzwords, these concepts have for some time opened up murky spaces for discussion that, for some, resemble an advertising landscape and, for others, a chamber of horrors more than an informed debate. Instead of technocratic fantasies of power and creation or technophobic takeover scenarios, the didactic exhibition contributions of the Ars Electronica Futurelab aim to convey basic knowledge and approaches to the autonomous use of AI technologies.

Thanks to its accessibility and popularity, the exhibition at the Ars Electronica Center has since found an offshoot: for the Deutsches Museum in Bonn, the Ars Electronica Futurelab designed two themed experience spaces for the exhibition Mission KI.

In Understanding AI – Episode 1, Ali Nikrang, Peter Freudling, and Stefan Mittlböck-Jungwirth-Fohringer of the Ars Electronica Futurelab explain how visitors can experience AI through the installations at the Ars Electronica Center.

Credits

Ars Electronica Futurelab: Peter Freudling, Stefan Mittlböck-Jungwirth-Fohringer, Ali Nikrang, Arno Deutschbauer, Horst Hörtner, Gerfried Stocker, Erwin Reitböck, Susanne Teufelauer