The tracking system pharus was developed at the Ars Electronica Futurelab by researcher and artist Otto Naderer to give groups of people of any size a way to interact in almost any environment.

What sets this scalable system apart is its ease of use: people need neither markers nor any other devices to be detected by pharus. They are registered simply by entering the monitored area. This lets pharus meet a wide range of requirements, whether for developing complex programs in the Deep Space 8K of the Ars Electronica Center, for participatory art performances, or even for monitoring assembly lines. As a result, pharus can also be put to many different uses by partners of the Ars Electronica Futurelab.

Since the late 1970s, experts working under the heading of "Human Computer Interaction" (HCI) have focused on developing better ways for humans and machines to interact. Increasingly, however, researchers are shifting their attention from interfaces for individuals to interfaces for large groups of people: "Crowd Computer Interaction" is a specific subfield that has shaped HCI research since 2009. Unconventional and intuitive forms of human-machine interaction were therefore also the focus of CADET (Center for Advances in Digital Entertainment Technologies), a research project run by the Ars Electronica Futurelab and the Fachhochschule Salzburg from 2010 to 2014. The project investigated interfaces and applications that can be controlled by several people at once, for example on the basis of motion data.

To determine the position of a moving subject in space and to recognize movement patterns, the Ars Electronica Futurelab developed a local positioning system. The requirements were exceptionally demanding: the system had to withstand the heavy load of large crowds, cover a correspondingly large interaction space, and still work precisely.

The tracking system that artist and researcher Otto Naderer developed for this purpose uses laser scanners (2D planar LiDARs) mounted at ankle height around an interaction area. The very first field test demonstrated the system's great potential: the many perspectives provided by the sensors reduce the effects of mutual occlusion in the room. The system was tested and refined in the Deep Space 8K of the Ars Electronica Center, where up to 30 people move simultaneously in a scalable interaction space. pharus was born!

Years of development have turned pharus (Latin for "lighthouse") into a highly versatile tracking system that follows any number of people and objects over long distances, even in crowded environments. It has already been applied to a wide variety of problems.

How person tracking with pharus works

Depending on the use case, the scalable tracking system relies on fewer or more 2D laser rangefinders. These sensors usually have a rotating head equipped with an infrared laser source and a photodiode. By measuring the time of flight, from the emission of a laser pulse to its reception at the diode, the distance to the reflecting object can be calculated. This similarity to radar gave rise to the term "LiDAR" (Light Detection and Ranging). Because the measuring unit is mounted on a rotating base, the sensor records the contour of its surroundings.
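The distance calculation can be written down directly: the pulse travels to the object and back, so the distance is half the round-trip path, d = c · t / 2. The minimal Python sketch below is not taken from pharus. It also shows how one rotation of a planar scanner can be turned into 2D points in the sensor's own frame. The function names, the angle parameters, and the 30 m cut-off are illustrative assumptions; real sensors report ranges directly in their own data formats.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second (in vacuum)


def tof_to_distance(round_trip_s: float) -> float:
    """Distance to the reflecting object from a pulse's round-trip time."""
    # The pulse travels to the object and back, so only half the path counts.
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def scan_to_points(ranges, start_angle, angle_step, max_range=30.0):
    """Turn one rotation of a planar scanner into (x, y) points.

    ranges:      measured distances in metres, one per angular step
    start_angle: angle of the first measurement in radians (hypothetical)
    angle_step:  angular resolution in radians (hypothetical)
    max_range:   assumed usable range; longer or zero readings are dropped
    """
    points = []
    for i, r in enumerate(ranges):
        if r <= 0.0 or r > max_range:
            continue  # no echo received, or beyond the assumed range
        angle = start_angle + i * angle_step
        # Polar (angle, range) to Cartesian in the sensor's own frame.
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points
```

For example, a pulse that returns after about 66.7 ns corresponds to a distance of roughly 10 m.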

The pharus processing pipeline collects these contours from every connected sensor. The measurements of all sensors, also called "echoes", are overlaid and evaluated. A key part of this process compensates for the side effects of mutual occlusion: if one or more sensors lose their line of sight, others can "take over" the person or object being tracked. Even if all sensors lose the tracked object, pharus can predict its course for a certain time and thus bridge such dropouts.
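To make the pipeline idea concrete, here is a minimal Python sketch. It assumes each sensor's pose (position and heading in a shared world frame) is known from calibration. It transforms every sensor's echoes into that common frame, overlays them, and follows a single target: if an echo lies near the predicted position, the track is updated; otherwise it is extrapolated at constant velocity for a limited time. All names, the 0.5 m gate, and the 1 s coasting limit are hypothetical. The source does not describe how pharus actually clusters echoes or predicts motion, so its implementation will differ.

```python
import math
from dataclasses import dataclass


def to_world(points, pose):
    """Transform sensor-frame points into the shared world frame.

    pose = (tx, ty, theta): the sensor's position and heading, assumed
    to be known from a prior calibration step.
    """
    tx, ty, theta = pose
    c, s = math.cos(theta), math.sin(theta)
    # 2D rotation by theta followed by a translation to (tx, ty).
    return [(tx + c * x - s * y, ty + s * x + c * y) for x, y in points]


def merge_echoes(scans):
    """Overlay the echoes of all sensors; scans is a list of (points, pose)."""
    merged = []
    for points, pose in scans:
        merged.extend(to_world(points, pose))
    return merged


@dataclass
class Track:
    x: float
    y: float
    vx: float = 0.0
    vy: float = 0.0
    missed: float = 0.0  # seconds since the last matching echo

    def update(self, x, y, dt):
        # Estimate velocity from the displacement, then adopt the new position.
        self.vx, self.vy = (x - self.x) / dt, (y - self.y) / dt
        self.x, self.y, self.missed = x, y, 0.0

    def coast(self, dt, max_coast=1.0):
        """No sensor sees the target: extrapolate at constant velocity.

        Returns False once the (hypothetical) coasting limit is exceeded.
        """
        self.missed += dt
        if self.missed > max_coast:
            return False
        self.x += self.vx * dt
        self.y += self.vy * dt
        return True


def step(track, merged, dt, gate=0.5):
    """One tracking cycle: match the nearest merged echo within `gate` metres
    of the predicted position, otherwise fall back to prediction."""
    predicted = (track.x + track.vx * dt, track.y + track.vy * dt)
    near = [p for p in merged if math.dist(p, predicted) <= gate]
    if near:
        x, y = min(near, key=lambda p: math.dist(p, predicted))
        track.update(x, y, dt)
        return True
    return track.coast(dt)
```

Gating around the predicted position rather than the last one is what lets another sensor pick up a target after an occlusion: whichever scanner sees it next contributes echoes in the same world frame.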

pharus in the Deep Space 8K

In the Deep Space 8K of the Ars Electronica Center, pharus is used for a wide range of applications:

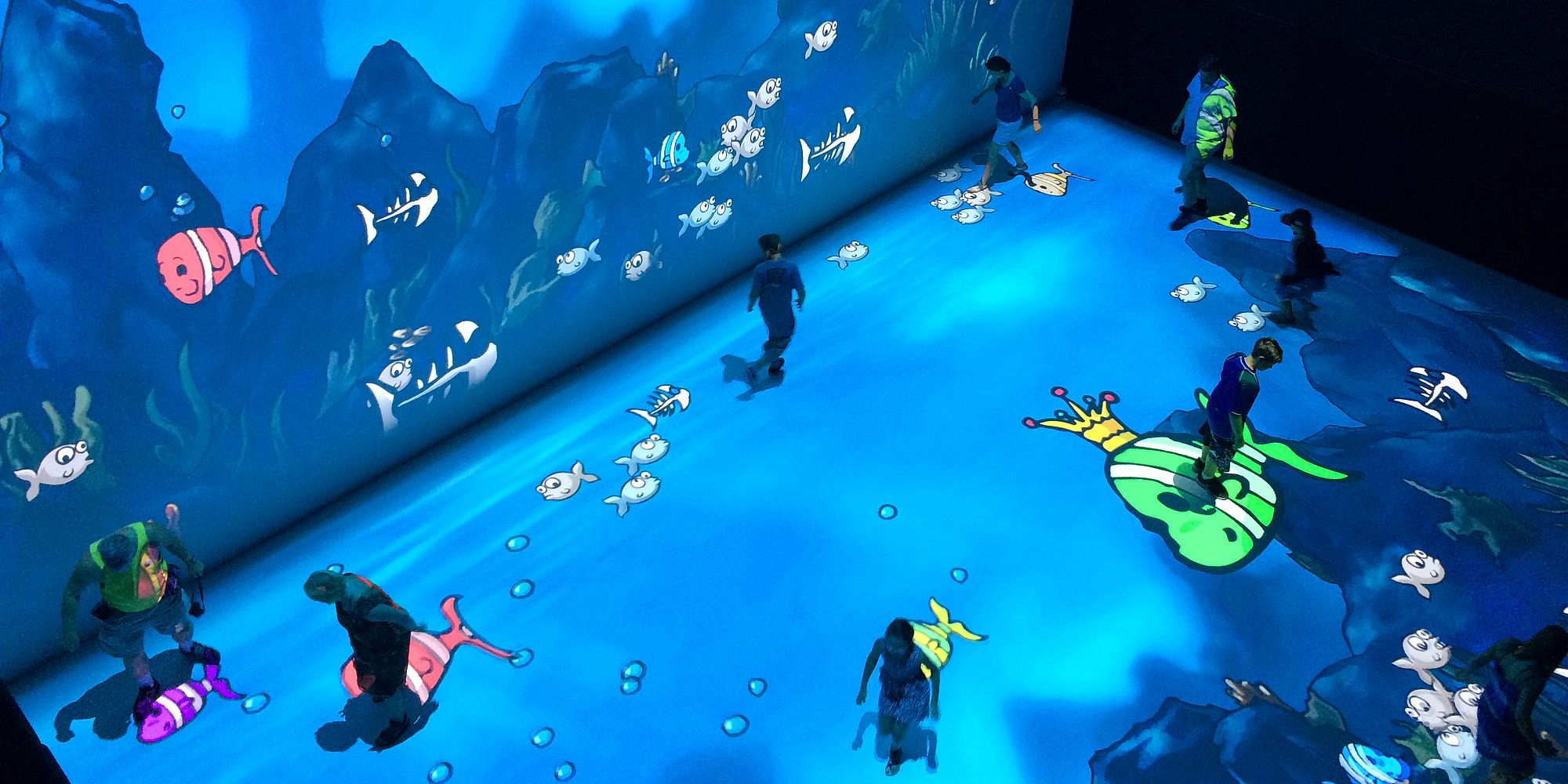

The Game Changer Suite is a collection of games designed for several players at once. Using their whole bodies, the players, represented by virtual avatars, compete against each other in the most adventurous scenarios. Originally developed by students of FH OÖ Campus Hagenberg as a temporary installation for the Ars Electronica Festival 2014, the Game Changer Suite has since become part of the interactive Deep Space 8K program. It invites visitors to choose from a series of fast-paced, agile mini-games to experience for themselves what the system can do and what it feels like to be digitally "tracked".

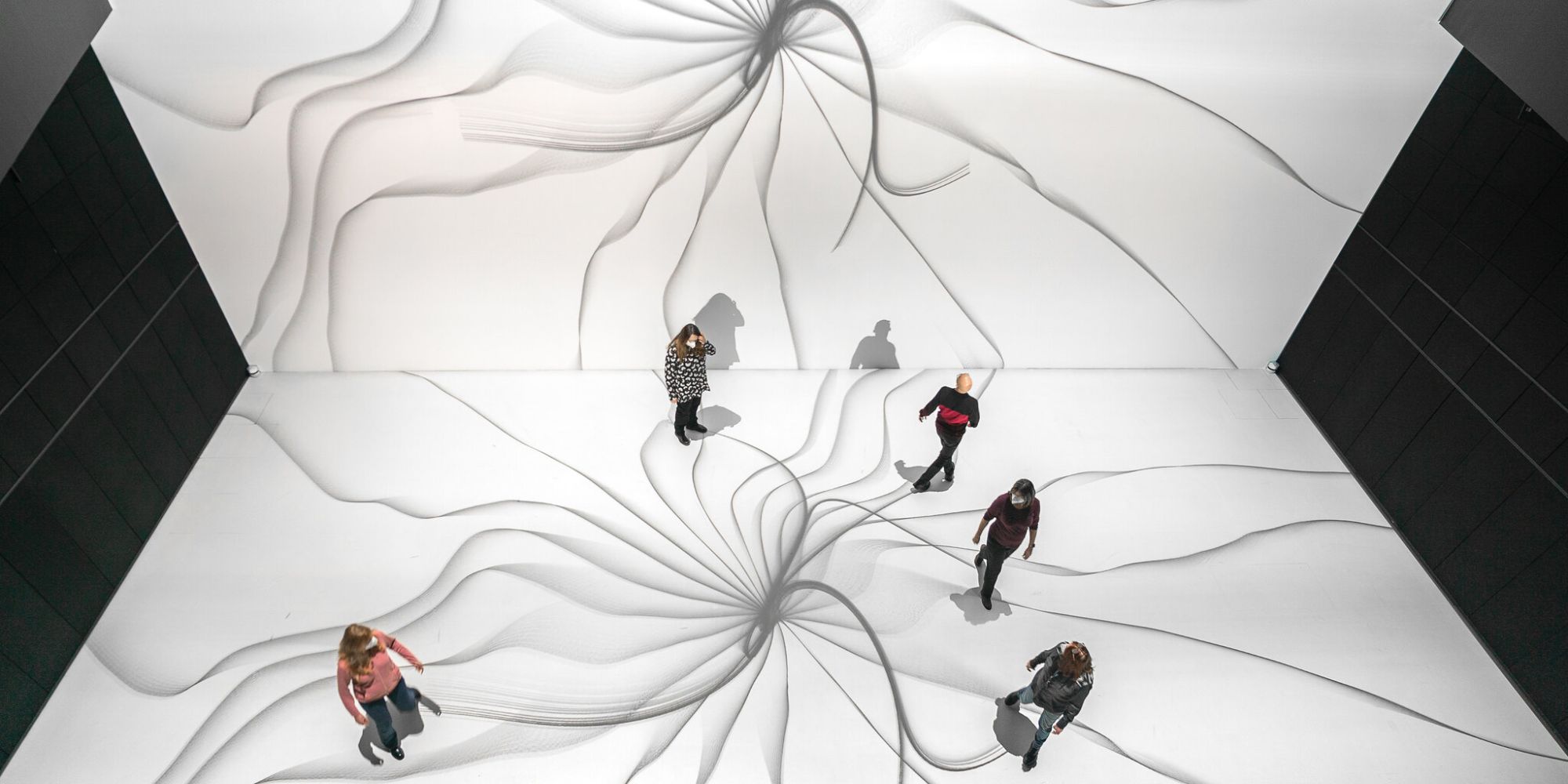

Building on the concept of "Cooperative Aesthetics" developed by Univ.-Prof. Dr. Gerhard Funk, students of "Time-Based and Interactive Media Art" at the Kunstuniversität Linz have, together with Prof. Gerhard Funk, created 30 artworks for the Deep Space of the Ars Electronica Center since 2015. These projects were presented as part of an Ars Electronica Home Delivery series. Combining the laser tracking system pharus with the huge projection environment of the Deep Space 8K, the students' works give visitors a collective, audiovisual, aesthetic experience.

pharus – Working for business, art, and culture

pharus can be used in a remarkably wide range of settings, and its use is by no means limited to the Deep Space 8K and the Ars Electronica Center:

In a bridge connecting two main buildings of the SAP campus in Walldorf, Germany, pharus powers the interactive sound installation Building Bridges. Here the system translates the movements of passers-by into music using a compositional algorithm. The bridge serves both as a stage and as a musical instrument: simply by crossing it, passers-by compose music themselves. The Ars Electronica Futurelab realized the project in 2013 together with composer Rupert Huber.

Set against the tension between control and self-determination in the age of digital surveillance, the performance SystemFailed, which uses pharus tracking from the Ars Electronica Futurelab, asks how much agency we have in the face of a seemingly all-powerful digital system. Together with the audience, the artist collective ArtesMobiles uses its performative staging to examine the power structures of an increasingly digitized world. An AI becomes the protagonist of the game, collecting the movement data of all participants over the course of SystemFailed and sorting it neatly. Algorithmically controlled systems are questioned in a playful way, and the participants soon go through a revealing experience of self-discovery: Will they adapt? Or will they rebel?

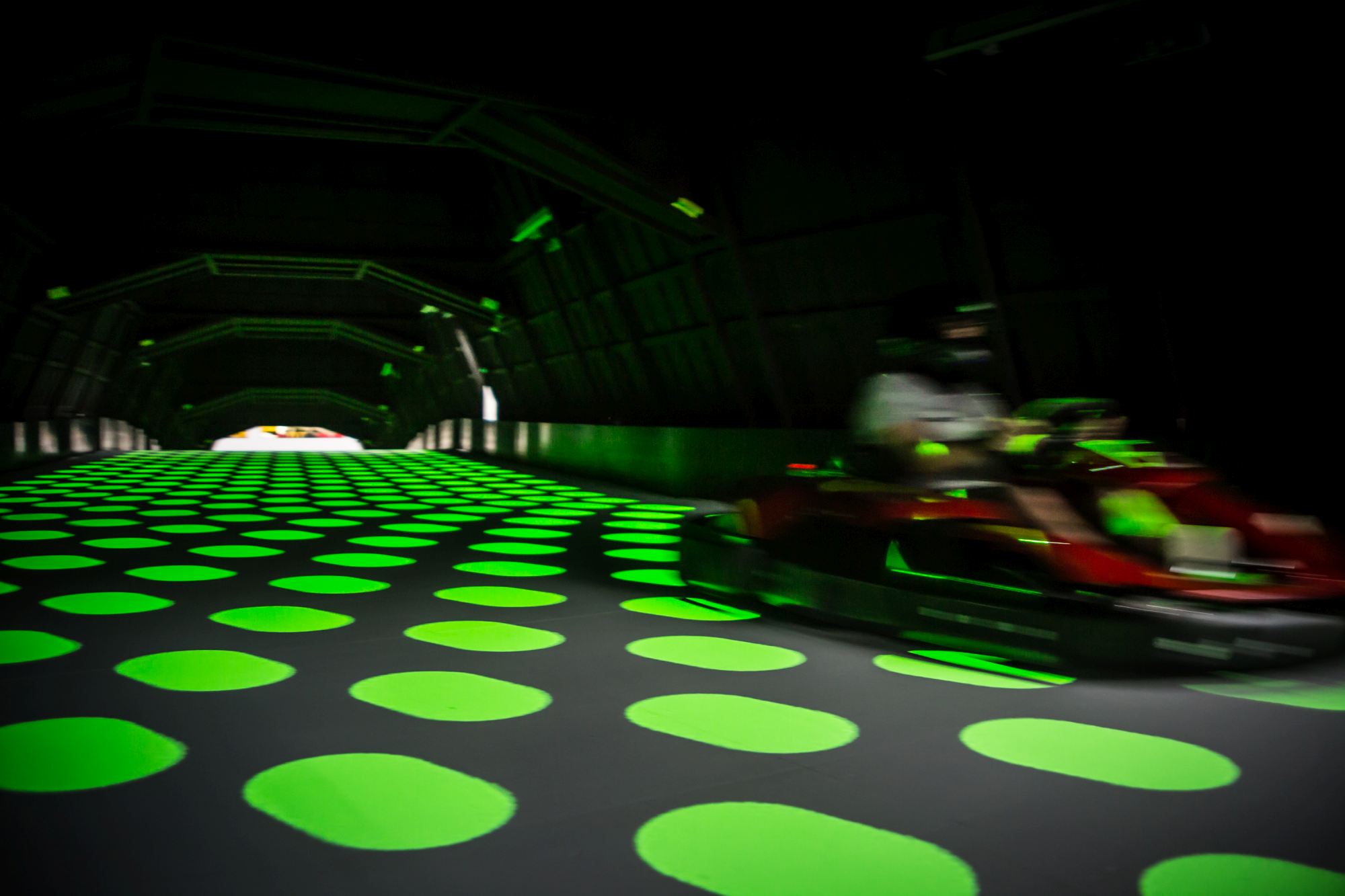

pharus is also breaking new ground at the Rotax MAXDome e-kart experience course. The centerpiece of the track is a 50-meter tunnel with floor projection. During their fast ride, drivers can collect additional "boosts" by mastering challenges. The fast, agile karts require a highly precise and very responsive tracking system, and here too pharus met the requirements. In this setup it works together with a coarse radio-based positioning system that handles identification, while pharus delivers high accuracy and a high scan rate.
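One way such a combination could work is to let the coarse radio system supply only identities and the LiDAR tracks supply positions. The Python sketch below is an assumption, not the actual MAXDome implementation: it greedily pairs each radio fix with the nearest unclaimed LiDAR track. The function name, the data layout, and the 3 m error bound are hypothetical.

```python
import math


def assign_ids(radio_fixes, lidar_tracks, max_dist=3.0):
    """Label precise LiDAR tracks with the identity of the nearest coarse
    radio fix, using greedy nearest-neighbour matching.

    radio_fixes:  {kart_id: (x, y)}   coarse, but carries identity
    lidar_tracks: {track_no: (x, y)}  precise, but anonymous
    max_dist:     assumed upper bound on the radio system's error (metres)
    """
    # All candidate pairs, closest first.
    pairs = sorted(
        (math.dist(rp, tp), kid, tno)
        for kid, rp in radio_fixes.items()
        for tno, tp in lidar_tracks.items()
    )
    labels, used_ids, used_tracks = {}, set(), set()
    for d, kid, tno in pairs:
        if d > max_dist:
            break  # sorted ascending, so every remaining pair is too far
        if kid in used_ids or tno in used_tracks:
            continue  # each identity and each track is used at most once
        labels[tno] = kid
        used_ids.add(kid)
        used_tracks.add(tno)
    return labels
```

Greedy matching is simple and fast. Where many karts bunch together, an optimal assignment, for example with SciPy's `scipy.optimize.linear_sum_assignment`, would avoid occasional mislabeling.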

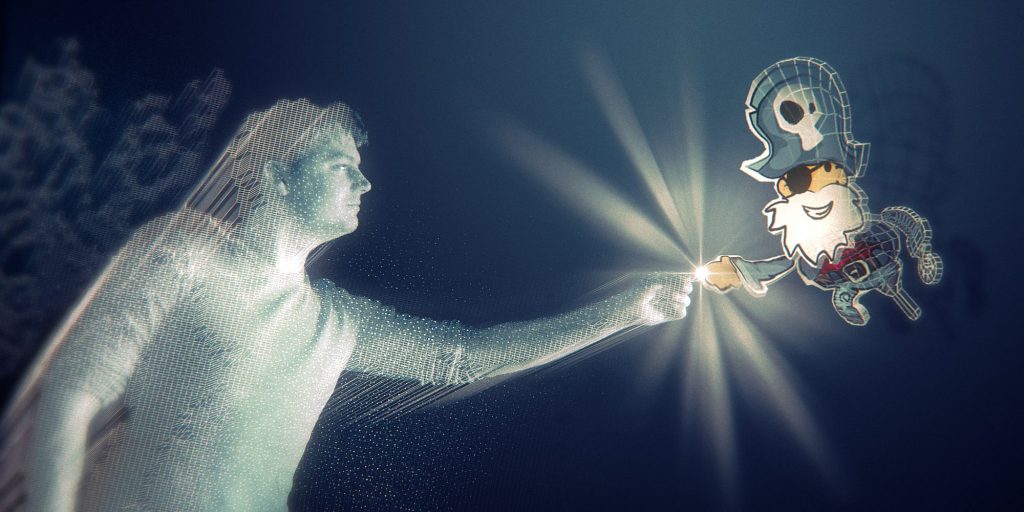

Australian media artist Daniel Crooks also approached the Ars Electronica Futurelab with a very demanding challenge during his 2014 artist residency: in a "scan room", a purely virtual sculpture was to emerge from the movement of a person. His artistic work revolves around treating time as a physical material. With Real Imaginary Objects, the Ars Electronica Futurelab developed this room. Using four sensors, it built an Object Slicer: a pharus variant that calculates the cross section of a detected body and can stack these cross sections into 3D models in real time. The results of this artistic research project have already been shown at numerous venues around the world.
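The Object Slicer principle, capturing a body's horizontal cross section frame by frame and stacking the slices, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the Real Imaginary Objects code: the scan room is assumed to be an axis-aligned rectangle in world coordinates, each frame's echoes are treated as one cross section, and successive frames are stacked at a fixed, hypothetical layer height, so that time becomes the vertical axis of the sculpture.

```python
def slice_from_scan(points, region):
    """Keep only the echoes inside the scan room, i.e. the current cross
    section of the body standing in it.

    region = ((xmin, ymin), (xmax, ymax)): assumed axis-aligned room bounds.
    """
    (xmin, ymin), (xmax, ymax) = region
    return [(x, y) for x, y in points if xmin <= x <= xmax and ymin <= y <= ymax]


def stack_slices(slices, layer_height=0.01):
    """Stack successive cross sections into a 3D point cloud.

    Time becomes the vertical axis: frame i is placed at height
    i * layer_height (a hypothetical spacing in metres).
    """
    return [
        (x, y, i * layer_height)
        for i, cross_section in enumerate(slices)
        for x, y in cross_section
    ]


room = ((0.0, 0.0), (2.0, 2.0))
frames = [[(0.9, 1.0), (1.1, 1.0), (5.0, 5.0)], [(1.0, 1.1)]]
cloud = stack_slices([slice_from_scan(f, room) for f in frames], layer_height=0.5)
```

Here the echo at (5.0, 5.0) lies outside the room and is discarded. The remaining points end up at heights 0.0 and 0.5. Such a point cloud could then be meshed or exported for display.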

pharus – On the road to a mobile future

pharus is currently being evaluated for use on mobile platforms: autonomous vehicles could be equipped with particularly compact sensors to gain a better understanding of their surroundings through laser tracking. pharus is also used in the research project Cobot Studio, which works on improving the coexistence of humans and machines. There it helps detect people and obstacles and tell them apart, supporting path planning and safety.

Further potential lies in swarm research: bots equipped with pharus would be able to detect and locate neighboring vehicles. This would let each bot infer its own position within the swarm, improving the accuracy and robustness of its positioning system.
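As a rough illustration of how detecting neighbors could support self-localization, the Python sketch below assumes that each neighbor broadcasts its own position and that the bot's scanner measures the offset to that neighbor in the same world orientation. Subtracting each measured offset from the reported position gives one estimate of the bot's own position. Averaging these estimates and blending the result with the bot's own positioning adds redundancy. All names and the equal weighting are hypothetical; the source describes this application only as future potential.

```python
def estimate_from_neighbours(observations):
    """Estimate the bot's own position from neighbours it can see.

    observations: list of (neighbour_pos, measured_offset), where
    neighbour_pos is the position a neighbour reports for itself and
    measured_offset is where this bot's scanner sees it, relative to the
    bot and assumed to be already rotated into the shared world orientation.
    """
    if not observations:
        return None  # no neighbours in view
    xs = [nx - dx for (nx, ny), (dx, dy) in observations]
    ys = [ny - dy for (nx, ny), (dx, dy) in observations]
    return (sum(xs) / len(xs), sum(ys) / len(ys))


def fuse(own_estimate, swarm_estimate, w_own=0.5):
    """Blend the bot's own positioning with the swarm-derived estimate;
    if either source is unavailable, fall back to the other."""
    if swarm_estimate is None:
        return own_estimate
    if own_estimate is None:
        return swarm_estimate
    return tuple(w_own * a + (1 - w_own) * b for a, b in zip(own_estimate, swarm_estimate))
```

The fallback branches in `fuse` reflect the robustness argument: if the bot's own positioning fails, the swarm-derived estimate can still keep it localized, and vice versa.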

Credits

Research & Development: Otto Naderer