The first AI x Music Festival, organized by Ars Electronica and the European Commission as part of the STARTS initiative, is dedicated to the encounter between human creativity and technical perfection. From September 6 to 8, 2019, Ars Electronica will gather musicians, composers, cultural historians, technologists, scientists, and AI developers from all over the world in Linz to discuss the interaction between humans and machines through concerts and performances, conferences, workshops, and exhibitions.

Overview

Renowned personalities from the world of art, such as Hermann Nitsch (AT), Christian Fennesz (AT), Markus Poschner (DE), Dennis Russell Davies (US/AT), Maki Namekawa (JP/AT), Memo Akten (TR), Anthony Moore (UK/FR), and Sophie Wennerscheid (DE), and from the world of science, such as Josef Penninger (AT), Siegfried Zielinski (DE), and Ludger Brümmer (DE), will be present. Other participants include Matthias Röder (DE) from the Karajan Institute, the author, theologian, editor, filmmaker, and presenter Renata Schmidtkunz (AT), and Amanda Cox (US) from the New York Times’s data journalism section “The Upshot.” In addition, there will be internationally leading developers from the Yamaha R&D Division AI Group and the Glenn Gould Foundation, from Google’s Magenta Studio, SonyLab, IRCAM, and Nokia Bell Labs, as well as from various start-ups.

The venues of the “AI x Music Festival” are the Anton Bruckner Private University, the Ars Electronica Center, the Linz Donaupark, POSTCITY, and the St. Florian Monastery. The last of these is the undisputed hotspot of the AI x Music Festival: whether marble hall, church, crypt, or tomb, the impressive rooms of this spiritual site offer a perfect setting for reflecting on the future role of intelligent machines and our self-image as human beings.

Program

Friday, September 6, 2019 / POSTCITY, Ars Electronica Center

Workshops

The AI x Music Festival will start with a series of workshops at POSTCITY. Reeps One (UK) from Nokia Bell Labs will focus on disruptive research for the next phase in human history, Jérôme Nika (FR), who works with IRCAM researchers, will reflect on the role of human-machine interaction in the context of music, and Daniele Ghisi (IT) will host a workshop titled “La machine des monstres.” Computer music designer, musician, and researcher Koray Tahiroğlu (FI/TR) will present tools for real-time performances of digital music developed at Aalto University. Within the framework of the STARTS program, which is also jointly organized with the European Commission, there will be further lectures dedicated to the interweaving of AI and music.

Performance

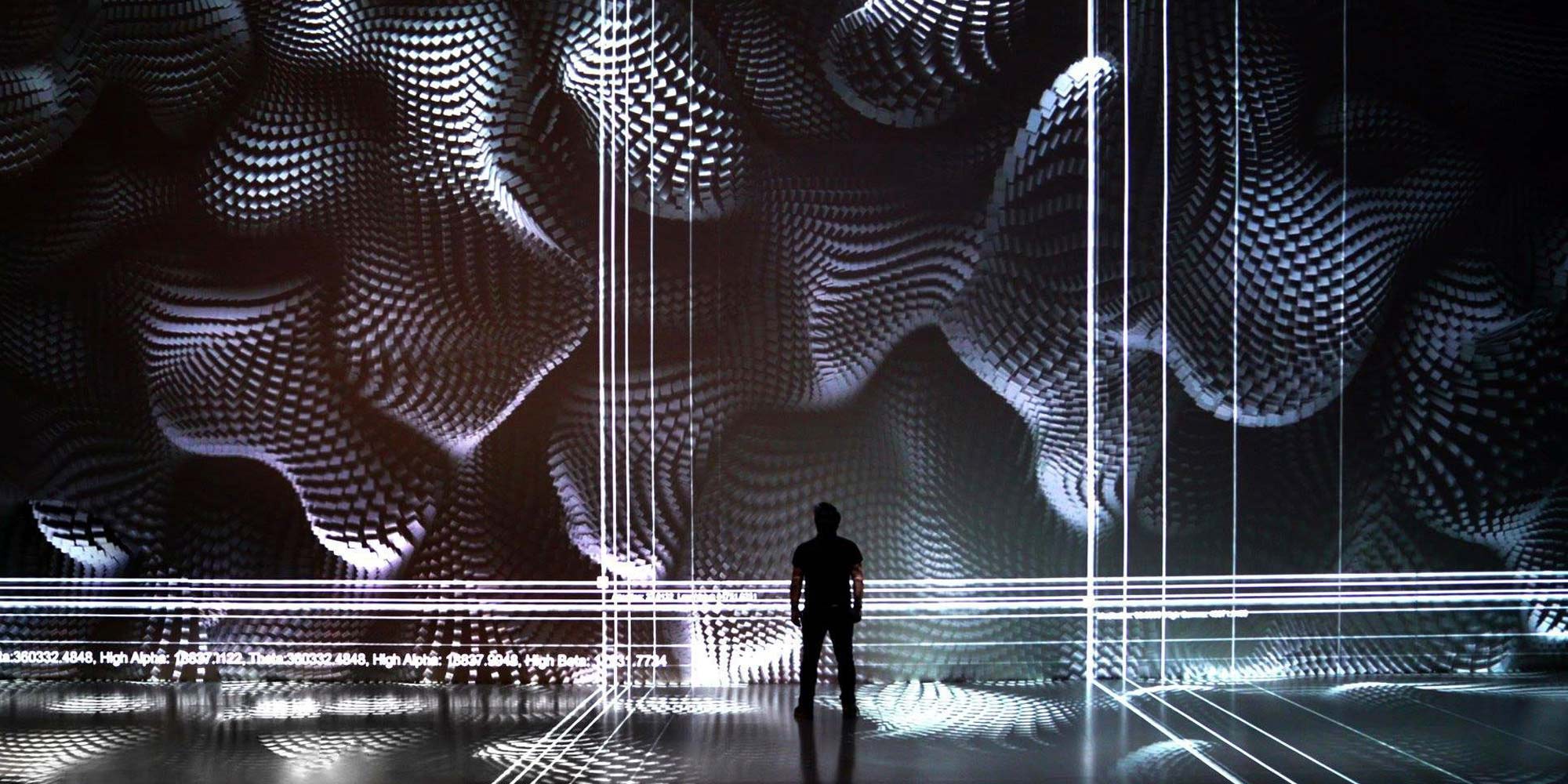

In the Ars Electronica Center’s Deep Space, pianist Kaoru Tashiro (JP) and digital visual artist OUCHHH (TR) will fuse music and digital images into a fascinating live performance.

Big Concert Night

In the evening, the “Big Concert Night” of this year’s Ars Electronica will also be the opening concert of the “AI x Music Festival” (and will therefore, for once, take place on Friday rather than Sunday!). “Mahler-Unfinished – Music meets AI” is the title of the ambitious concert in POSTCITY’s spectacular hall, which will be an encounter between orchestral music and electronic music, human and robotic dancers, and artificial intelligence. Christian Fennesz (AT) will kick the event off with “Mahler Remixed.” The stylistically influential proponent of electronic music in Austria will use samples from Mahler symphonies for his live performance; the visuals will be added by Lillevan (DE). Towards the end of this session, the pianist Markus Poschner (DE) will join in and create a bridge between electronic and orchestral music through his improvisations. Conducted by Poschner, the Bruckner Orchestra Linz will then perform Mahler’s Unfinished Symphony No. 10. “Unfinished” is a word that always resonates with the challenge to think ahead and reinterpret: not to imitate or even improve Mahler, but to test new forms of expression with today’s artistic approaches and technical possibilities. “Mahler Unfinished” was therefore also the creative starting point for Ali Nikrang (IR/AT), pianist, composer, computer scientist, AI developer, and researcher at the Ars Electronica Futurelab. With MuseNet, currently OpenAI’s most powerful AI system for musical applications, Nikrang has reworked the significant viola theme at the beginning of Mahler’s 10th Symphony. Only the first ten notes of the original melody, along with a few stylistic parameters, were entered into the system. The outputs included countless new interpretations, one of which Nikrang selected and then orchestrated by hand.
The result will be performed by the Bruckner Orchestra Linz during the “Big Concert Night.” As at the last Big Concert Night, Silke Grabinger (AT) will again take part: her dance will be captured by motion tracking and transferred to industrial robots, which in turn will make a life-sized puppet dance like a marionette. With “Alive Painting,” Akiko Nakayama (JP) will also contribute an impressive real-time visualization. Last but not least, with his piece “Sonic Robots,” Moritz Simon Geist (DE) will deliver a stirring performance with robotic instruments of his own construction. After this, the Ars Electronica Nightline will begin.

Saturday, September 7, 2019 / Anton Bruckner Private University, St. Florian Monastery

The second day of the AI x Music Festival will start with “Sonic Saturday – Medium Sonorum,” curated by Volkmar Klien (AT) and Andreas Weixler (AT) at the Anton Bruckner Private University. First, Tobias Leibetseder (AT) & Astrid Schwarz (AT), Tania Rubio (MX), and Erik Nyström (SE) will perform; after the break Kaori Nishii (JP) and Angélica Castelló (MX/AT) will stage their performance “Luc Ferrari.”

Lectures, talks, demos, concerts

At noon, the AI x Music Festival will move to the St. Florian Monastery. Throughout the afternoon and evening, moderated lectures, talks, demonstrations, and concerts will be on the program.

To start, Hermann Nitsch (AT) will be giving an organ concert, and he won’t be playing just any instrument; the Bruckner organ of the St. Florian Basilica is considered to be one of the most magnificent in all of Europe.

The first panel discussion, with notable participants, will start immediately afterwards: In conversation with Renata Schmidtkunz (AT), Josef Penninger (AT) and Sophie Wennerscheid (DE) will address the role of science and research, which initially had to confirm a religious view of the world, were then subordinated to economic rationality, and are now, in the dawning age of AI, reorienting themselves. Cellist Yishu Jiang (AT) will take this up with a performance and ask what preconditions and strategies would be necessary for a reflective social discourse on AI.

The next session will deal with AI applications that open up new artistic possibilities. In conversation with Renata Schmidtkunz, François Pachet (FR) and Markus Poschner (DE) will discuss the associated effects, such as new business models or (copyright) legal regulations. Immediately afterwards, Weiping Lin (TW) will play the violin.

The next star-studded panel will continue in this vein: Walter Ötsch (AT) and Marta Peirano (ES) will talk about the social acceptance of current AI research.

The next highlight will be “Calculated Sensations,” an “expanded lecture” with Siegfried Zielinski (DE), Europe’s leading expert on media archaeology and the cultural history of machines, and Anthony Moore (UK), British experimental musician, composer, producer, and co-writer of Pink Floyd songs. The two will invite you on a journey through four millennia of music history. In seven different locations of the St. Florian Monastery, one main theme will be addressed on each occasion—with short texts, experimental sounds, and dialogue.

To follow on, we will then have four “Conversations on AI” and two “Reports and Proceedings”: Vladan Joler (RS) and Vuk Ćosić (SI) will discuss the relationship between the humanities and artificial intelligence in the past and present, while Aza Raskin (US) and Maja Smrekar (SI) will deal with the parallels and similarities between artistic practices in AI and life art. Markus Poschner (DE) and Ali Nikrang (IR/AT) will deal with the issues of composition, interpretation, reproduction, and reception. Marta Peirano (ES) will ask about the tension between information and disinformation in the age of AI, Clara Blume (AT) and Naut Humon (US) will offer insights into the AI & music scene in the Bay Area, and Lynn Hughes (CA) will ask whether the soundtracks of current AI systems meet the demands of the gaming industry.

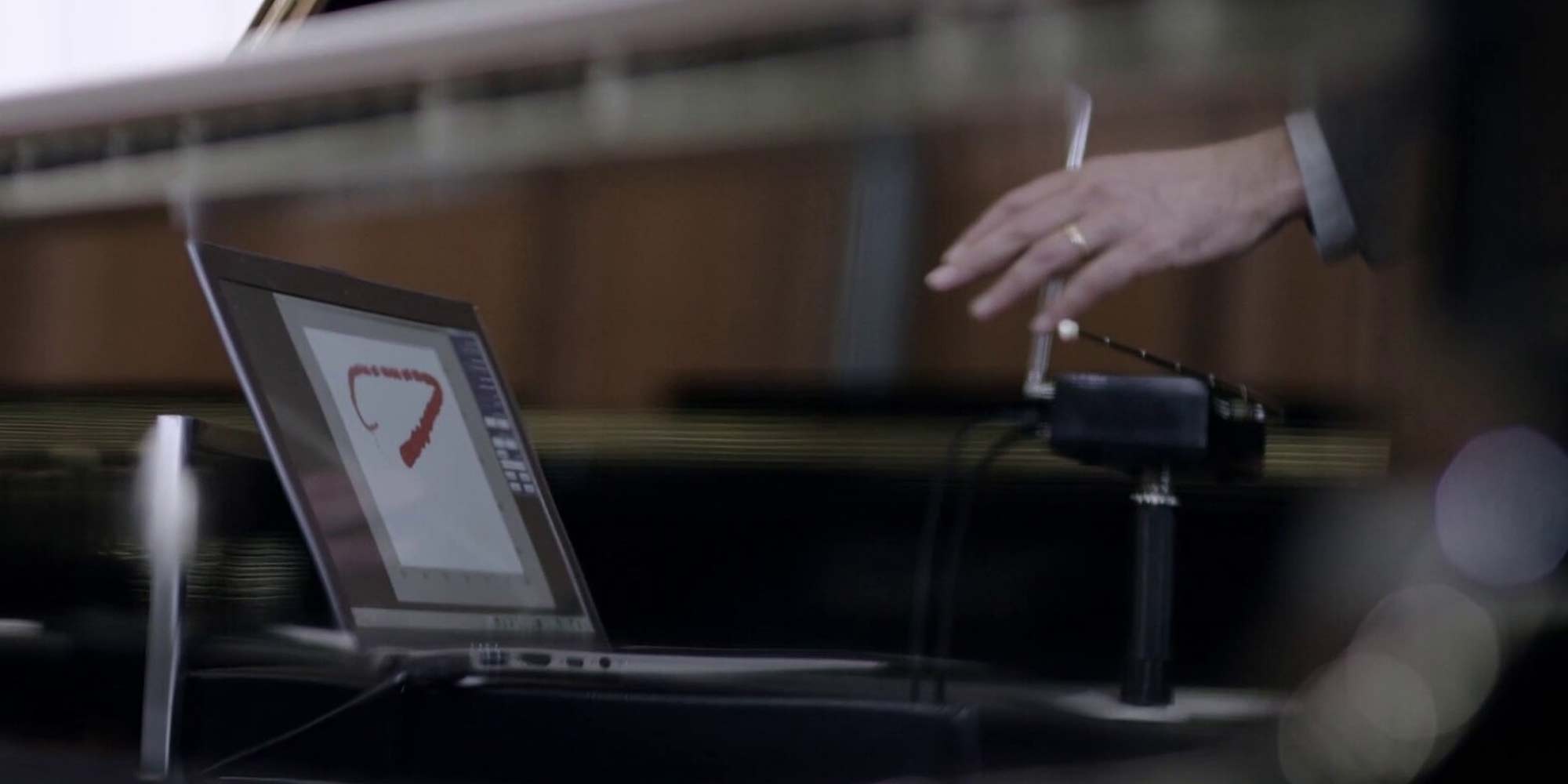

The next sessions will consist of talks and performances, all of which will deal with current AI applications. First, Dennis Russell Davies (US/AT), Maki Namekawa (JP/AT), and Francesco Tristano (LU) will discuss the tension between originality and authenticity when evaluating AI-generated music. Akira Maezawa (JP) and Brian M. Levine (CA) will then present a research project by Yamaha and the Glenn Gould Foundation, in which an AI system has been trained to emulate Glenn Gould’s interpretation style exactly. Markus Poschner (DE) and Norbert Trawöger (AT) will discuss machine learning applications that are based on statistical methods but whose results still seem spontaneous and original to us. Ali Nikrang (IR/AT) will take this up and show how he has reworked the viola theme of Mahler’s 10th Symphony using OpenAI’s MuseNet.

The next program block consists of performances and demonstrations around the AI-supported creation of music: Tomomi Adachi (JP) will use a performance AI called “Tomomibot,” which learns from musical improvisations to interact live with a human voice improviser. Muku Kobayashi (JP) and Mitsuru Tokisato aka SHOJIKI (JP) represent the completely analog opposite pole and improvise with nothing more than … adhesive strips! Based on the performance “Ultrachunk,” Memo Akten (TR) will deal with the role of AI applications in improvisation. The organist Klaus Sonnleitner (AT) invites you to a concert where he’ll be improvising on the organ in the spirit of Anton Bruckner; then, Roberto Paci Dalò (IT) will be playing the (bass) clarinet and working with the very special acoustics of the St. Florian Marble Hall. Rupert Huber (AT) will take his seat at the piano and improvise as well.

Another program block of the AI x Music Festival entails demos of current AI applications. Ali Nikrang (IR/AT) will discuss historical and current AI systems for the generation of music; he examines what makes musical data special and why it is a great challenge to compose music using AI applications. Thomas Grill (AT) and Martina Claussen (AT) will conduct numerous experiments to improve human-machine communication. Vittorio Loreto (IT) will demonstrate how music and AI merge at Sony CSL to create something new; the musicians of Ensemble Vivante (AT) will present the dramatically charged vocal music of Monteverdi.

The next block will focus on presentations and discussions on current AI research activities. Ludger Brümmer (DE) will talk about transdisciplinary research at the Hertz Lab of the ZKM, while François Pachet (FR) will explain his research on “flow machines” like SKYGGE.

We will continue with a series of workshops. Memo Akten (TR) will show how generative adversarial networks (GANs) can be used as a medium for creative expression and storytelling. Finally, with the project “Anatomy of an AI System,” Vladan Joler (RS) from SHARE Lab will present a meticulous compilation of all the human, data, and natural resources required to build and operate an Amazon Echo.

Evening Concert

On Saturday evening, the AI x Music Festival invites you on a journey through time from the beginnings of music history to the here and now. Composer and organist Wolfgang Mitterer (AT) will demonstrate the power and effect human actors can unleash on stage, even in times of increasing digitalization. Quadrature (DE), by contrast, receive signals from space, which are interpreted by an AI system and transmitted to a robotic keyboard made of electromagnets that ultimately makes the organ play. The experts from the Yamaha R&D Division AI Group, the Glenn Gould Foundation, Francesco Tristano (LU), and musicians of the Bruckner Orchestra will contribute an AI-based performance. The crowning finale of the evening will be “Heavy Requiem – Buddhist Chant: Shomyo + Electronics.” Keiichiro Shibuya (JP), Eizen Fujiwara (JP), and Justine Emard (FR) will fuse traditional Buddhist music with electronic sounds.

Exhibitions

A number of art projects will also be presented on the premises of the monastery: With his installation “Anschwellen – Abschwellen,” Volkmar Klien (AT) explores the question of what makes a machine seem autonomous, reactive, and intelligent. The “Akusmonium” is a unique loudspeaker orchestra for the interpretation of computer-generated music and the creation of ephemeral, dynamically moving sound sculptures. In this way, Thomas Gorbach (AT) invites the audience on a journey from the history of electronic sounds through digital sound generation to AI-based compositions. Stefan Tiefengraber’s (AT) “WM_EX10 TCM_200DV TP-VS500 MS-201 BK26 MG10 [INSTALLATION/PERFORMANCE]” is an installation and performance in which unexpected and uncontrollable analog signals are altered and “bent” to create an audio/video noise image that in turn creates a time-based sculpture. With “Soundform No.1,” Yasuaki Kakehi (JP), Mikhail Mansion (US), and Kuan-Ju Wu (US) form a minimalist sound landscape and kinetic art installation of warmth, light, and movement.

Sunday, September 8, 2019 / Donaupark, POSTCITY

Talks

At POSTCITY, Roberto Viola (IT) of the European Commission will open a whole series of talks with his statement. Christine Bauer (AT) from JKU and Peter Knees (AT) from the TU Vienna conduct research on “music information retrieval” and will give an introduction to the field and its current state of technology. Philippe Esling (FR), Jérôme Nika (FR), and Daniele Ghisi (IT) will talk about their research at the Institut de Recherche et Coordination Acoustique/Musique (IRCAM) at the Centre Pompidou in Paris, which focuses on the power and limits of artificial neural networks. Jérôme Nika (FR), in particular, focuses on the introduction of authoring, composition, and control in human-computer music co-improvisation. This work has led to numerous collaborations and musical productions, particularly in improvised music (Steve Lehman, Bernard Lubat, Benoît Delbecq, Rémi Fox) and contemporary music (Pascal Dusapin, Marta Gentilucci). Then, we’ll hear from Nick Bryan-Kinns (UK) from Queen Mary University, Koray Tahiroğlu (FI/TR) from Aalto University, and Ludger Brümmer (DE) from ZKM. Next up is application-oriented AI research from the music industry, with François Pachet (FR) from Spotify, Vittorio Loreto (IT) from Sony CSL Paris, and Akira Maezawa (JP) from Yamaha. The final presenters will be the start-ups Amadeus Code, Endel, Fortunes, and Music Traveler.

Workshops

At the same time as the talks, there will be workshops with Ali Nikrang (IR/AT) from the Ars Electronica Futurelab, Alex Braga (IT) from “A-MINT,” and Philippe Esling (FR) from IRCAM, as well as with Gerald Wirth (AT) from the Vienna Boys Choir and Vive Kumar (IN) from Athabasca University.

Episode by the River

On Sunday evening, the AI x Music Festival will take place in the Donaupark. Under the title “Episode by the River,” Ars Electronica, the Bruckner Orchestra, and the Brucknerhaus will stage a homage to the very first “Klangwolke.” As in 1979, the starting point for this sound journey will be the orchestra concert in the Great Hall of the Brucknerhaus, which will not only be transmitted to the outside world via the powerful sound system of the Klangwolke, but will also provide the sound material for Wolfgang “Fadi” Dorninger (AT), Ali Nikrang (IR/AT), Roberto Paci Dalò (IT), Rupert Huber (AT), Markus Poschner (DE), Sam Auinger (AT), and Fennesz (AT) & Lillevan (DE), who will use it to create new acoustic, analog, and digital sound spaces in the Donaupark.

Exhibitions during the entire AI x Music Festival

In addition to the concerts, performances, conferences, lectures, panels, and workshops, the entire AI x Music Festival can also be experienced through a series of exhibitions and presentations. Founders, CEOs of leading companies, counter culture protagonists, scientists, and artists will be presenting their products, prototypes, and projects at POSTCITY.

EXHIBITION / ART

Domhnaill Hernon (IE), head of Nokia Bell Labs, and artist and beatboxer Reeps One (UK) make it clear that, contrary to the public debate, the development of AI systems is not about replacing people but about complementing and supporting them in a meaningful way, above all in the creative industries.

Alex Braga’s (IT) “A-MINT” is a new kind of adaptive artificial musical intelligence that, for the first time, is able to crack the improvisational code of all musicians in real time and improvise with them—music and videos are created during the performance without preset patterns, pitch, or BPM.

The recently reopened Ars Electronica Center, in turn, will be showing an entire exhibition on the subject. “AI x Music” traces the history of music and thus that of the instruments, tools, and apparatus used for its performance, recording, and reproduction. The show spans an arc from the first string and wind instruments of antiquity to today’s digital synthesizers, from the wax rollers and soot-covered glass plates of the first precursors of the gramophone to the digital streaming services of the internet. All of this ultimately leads to AI and machine learning and again to new possibilities for creative design that artists all over the world are already using. “AI x Music” makes it clear that this is not just about technological phenomena, but about fundamental questions of the relationship between man and machine.

EXHIBITION / INDUSTRY, start-ups and established companies

With “Music Traveler,” Dominik Joelsohn (DE/AT), Aleksey Igudesman (DE/AT), and Julia Rhee (KR/US) will be presenting a peer-to-peer platform that helps musicians find and book the next available rehearsal room, recording studio, and concert hall quickly and easily.

With his “Amadeus Code,” Taishi Fukuyama (JP) will be presenting an AI-based assistant for songwriting. The mobile app offers almost unlimited inspiration for topline melodies via different chord sequences and creates sketches of new music compositions.

With “Endel,” Oleg Stavitsky (RU) will be presenting a technology that creates personalized sounds to reduce stress, increase focus, and improve sleep. All sounds are generated in real time and influenced by location, time, heart rate, and cadence, which in turn are recorded on the smartphone.

“0W1 Audio” by Jean Beauve (FR) is a music startup that develops IoT audio platforms that deliver incredibly natural sounds.

EXHIBITION / RESEARCH

Matthias Röder (DE) and the Karajan Institute are constantly pushing the boundaries of new technologies in the creation, dissemination, and reception of music. As part of the AI x Music Festival, he will be reporting on the “AI and Music Hackathon” he initiated for young creatives and tech freaks.

“Computers that Learn to Listen” is a video showing some of the results of scientific research on AI and music conducted at the Institute for Computational Perception of the Johannes Kepler University Linz, directed by Gerhard Widmer (AT). Based on the latest advances in machine learning, computers learn to “listen to” and “understand” music, to recognize beat and rhythm, and to immediately identify pieces of music from a few played notes.

Koray Tahiroğlu (FI/TR) of Aalto University presents “NOISA” (Network of Intelligent Sonic Agents), an AI-based interactive music system whose sound agents support music performances. Each sound agent has a machine learning model for predicting and adjusting its performance behavior.